Beyond the Context Window: Mastering Agent Memory through Passive Context and Compressed Indexing

Skills Are Broken: Why AI Agents Need Passive Context, Not Better Prompts. Vercel's counterintuitive discovery that 8KB of compressed knowledge outperforms 40KB of documentation

Introduction

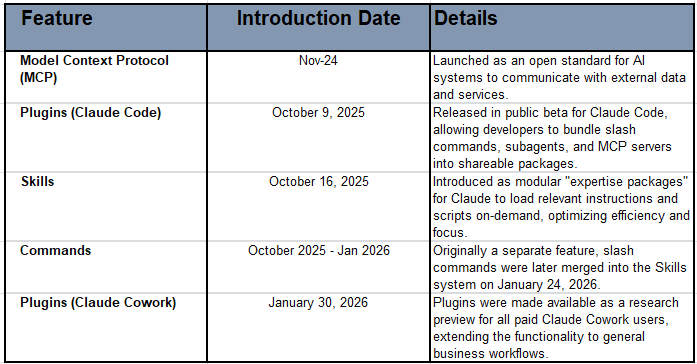

I have been blown away by the recent Enterprise grade releases, especially in the past 3 months or so. We’re witnessing an explosion of new capabilities. Of course many things preceeded it: Anthropic’s introduction of Skills and Plugins in 2024/25 (and updates via Skills and Plugins via CoWork in 2026), the Model Context Protocol (MCP) enabling agents to access external tools, and slash commands giving users direct control over agent behavior etc. These innovations promise to transform how AI agents access and utilize information. This week, more so for me. Personally, I relate to their strengths and operational capabilities (not only because they work! but) because quite simply - Strategic Modularity applied in the “Right Context”, makes sense!! My theme for project work done in the past.

Of couse, inspite of this exciting progress, a fundamental question remains unanswered: How do we ensure AI agents reliably use the right information at the right time? Note: You can tell that I have, off late, and will continue to give a lot of emphasis to the topic of “memory management” in my writings. I will have a lot more to share in coming posts. Now back to this one.

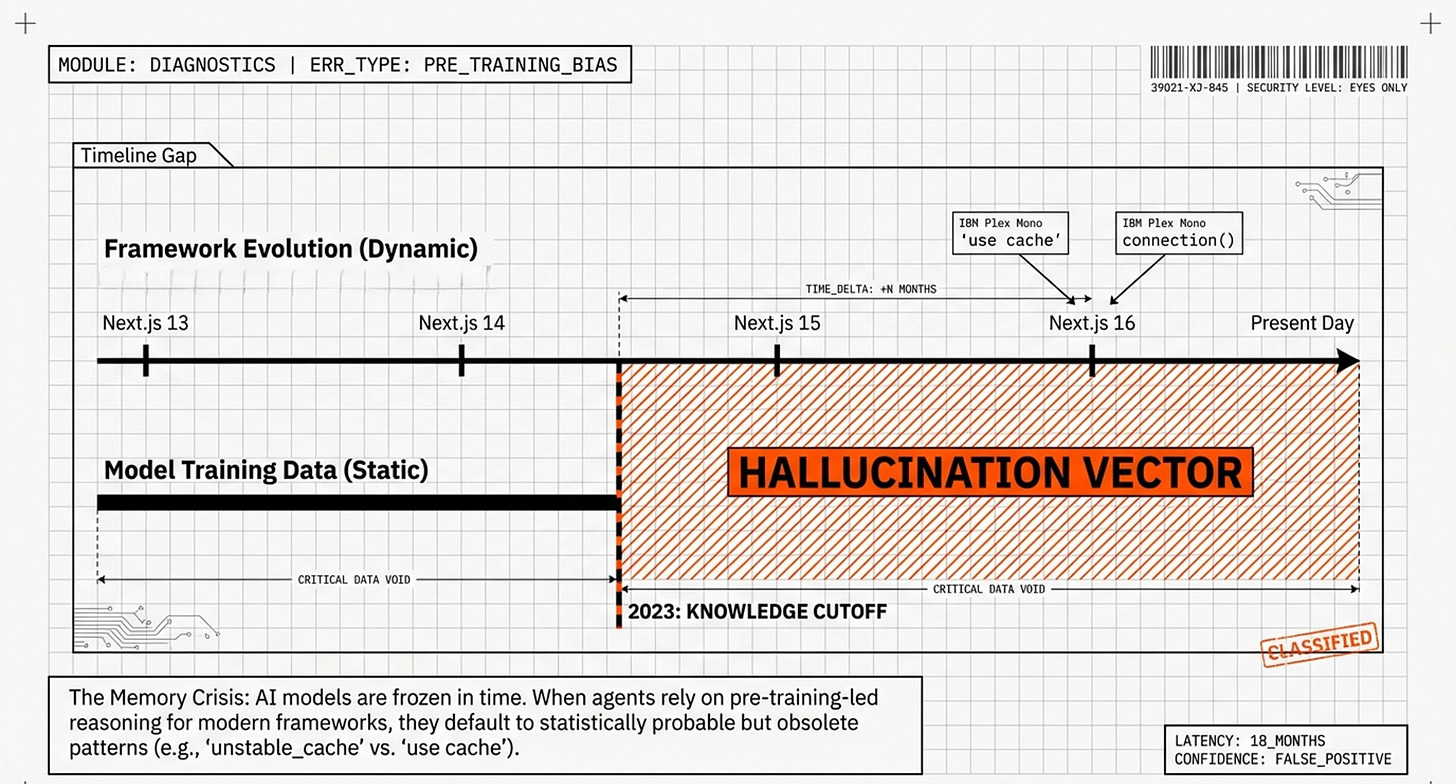

Timelines of recent capabilities with Anthropic’s Claude Models/Agents:

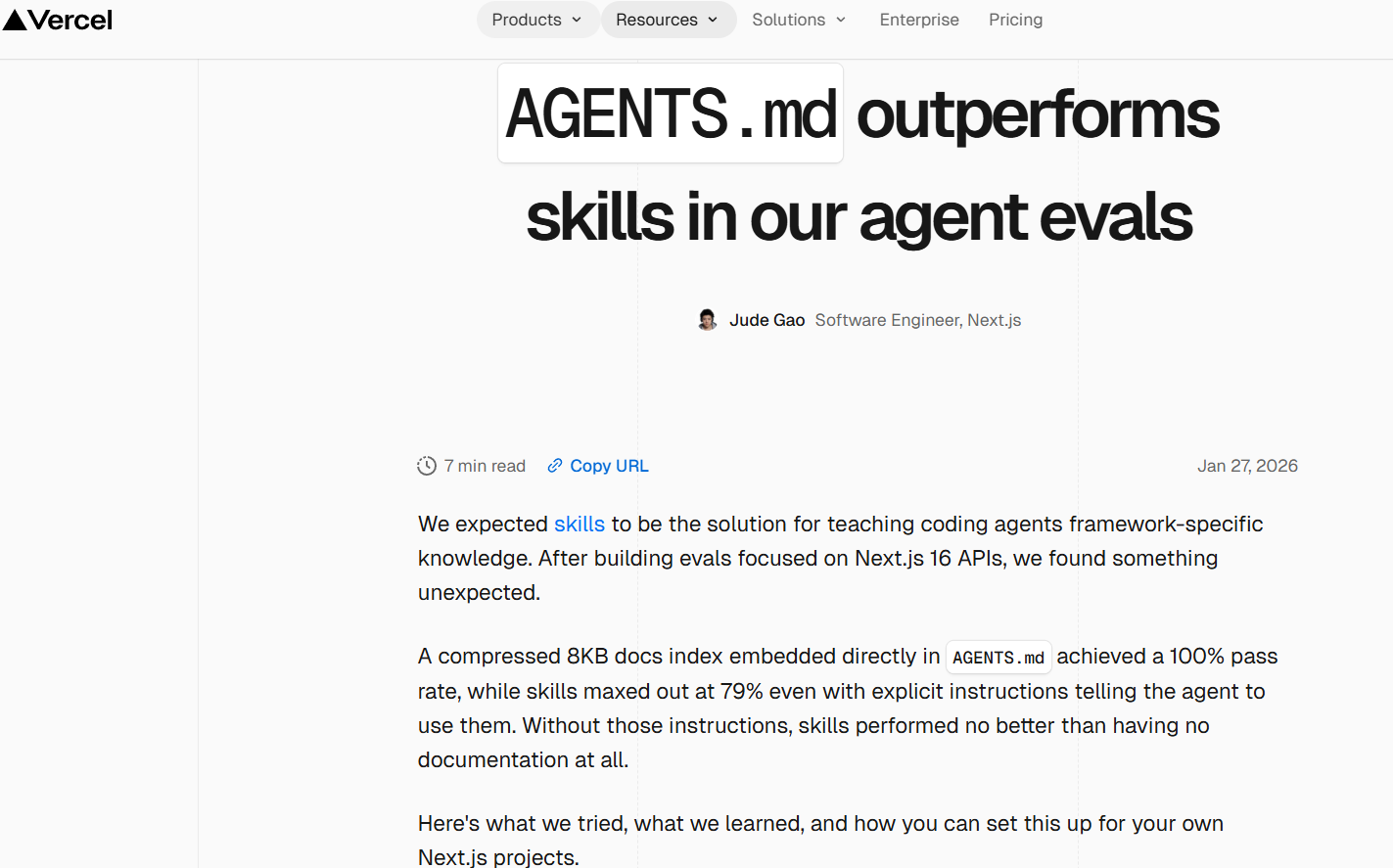

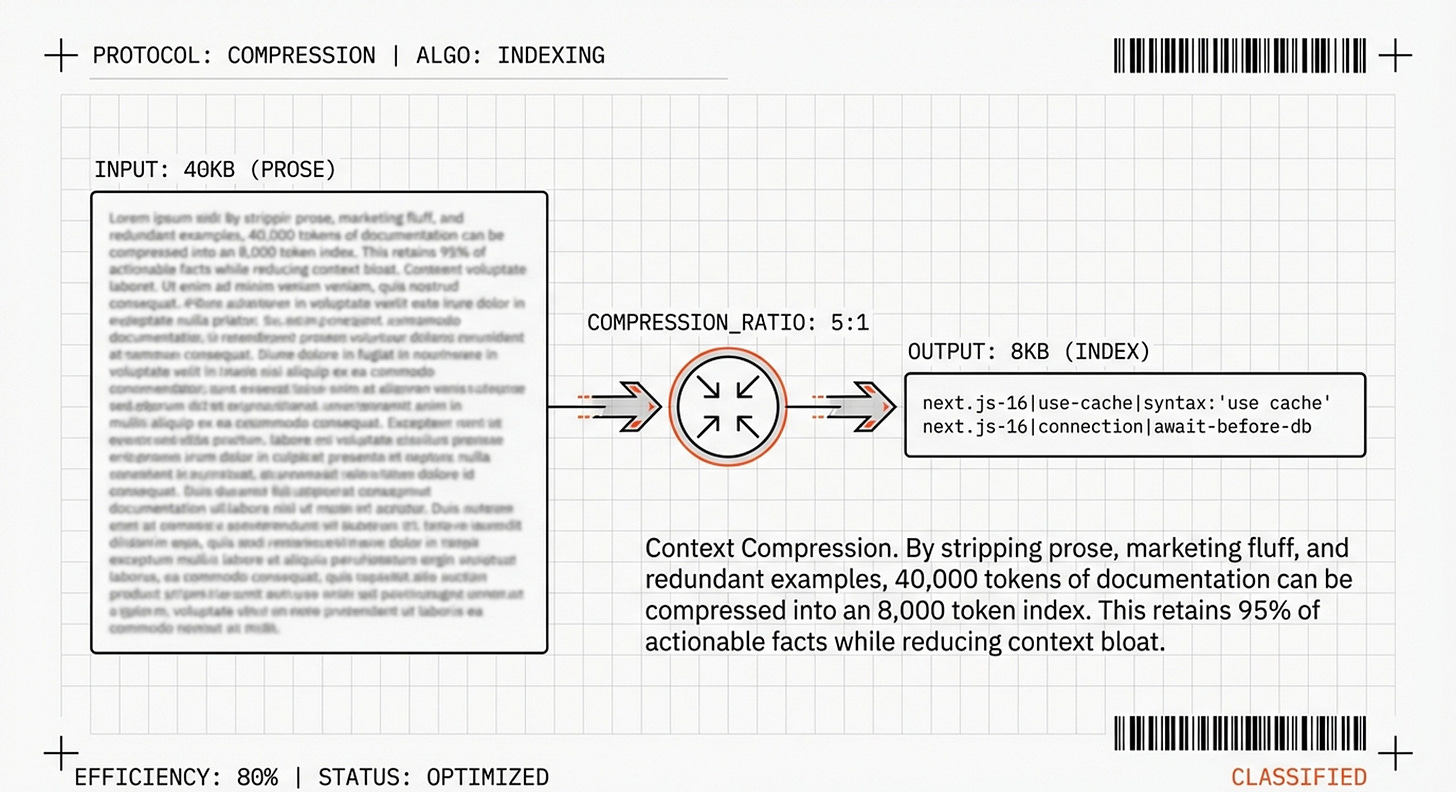

This article examines groundbreaking research from Vercel’s engineering team that challenges conventional wisdom about agent memory architecture - AGENTS.md Outperforms Skills.md. Their findings reveal a counterintuitive truth: the path to 100% reliability isn’t more sophisticated retrieval systems—it’s eliminating retrieval decisions. Through rigorous benchmark testing, Vercel discovered that a simple 8KB compressed index outperforms elaborate 40KB Skills-based systems, not by being smarter, but by being always present. Also note a postnote addition, of the recently released InfMem paper - included here because I want to make clear our focus and distinction on Static version specific facts vs Dynamic task specific evidence.

What you’ll learn, and that which I have found to be very useful:

Why traditional “Skills” fail 47-50% of the time, even with perfect documentation

The cognitive science behind passive context versus active retrieval

How to compress 40KB of framework documentation into an 8KB high-density index

A practical implementation guide using pipe-delimited indexing

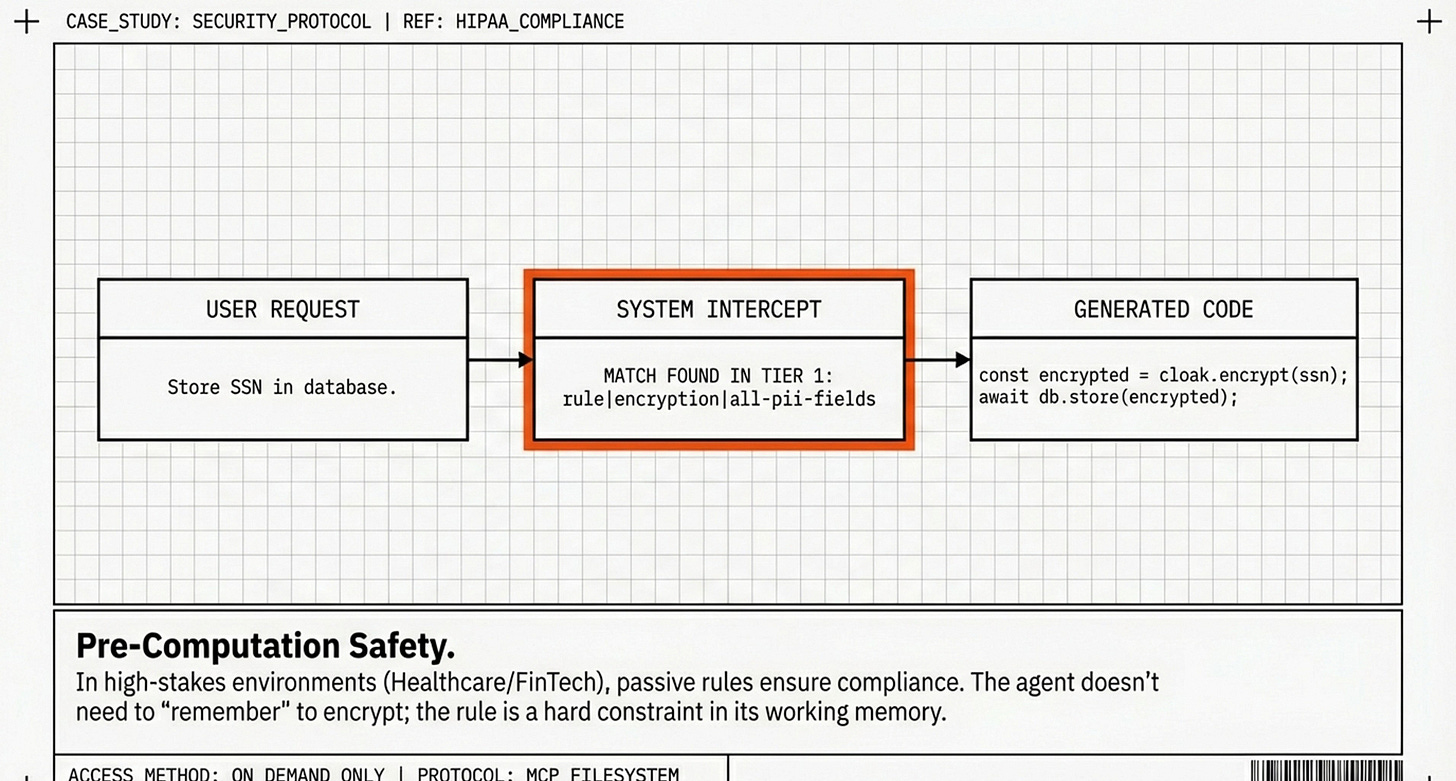

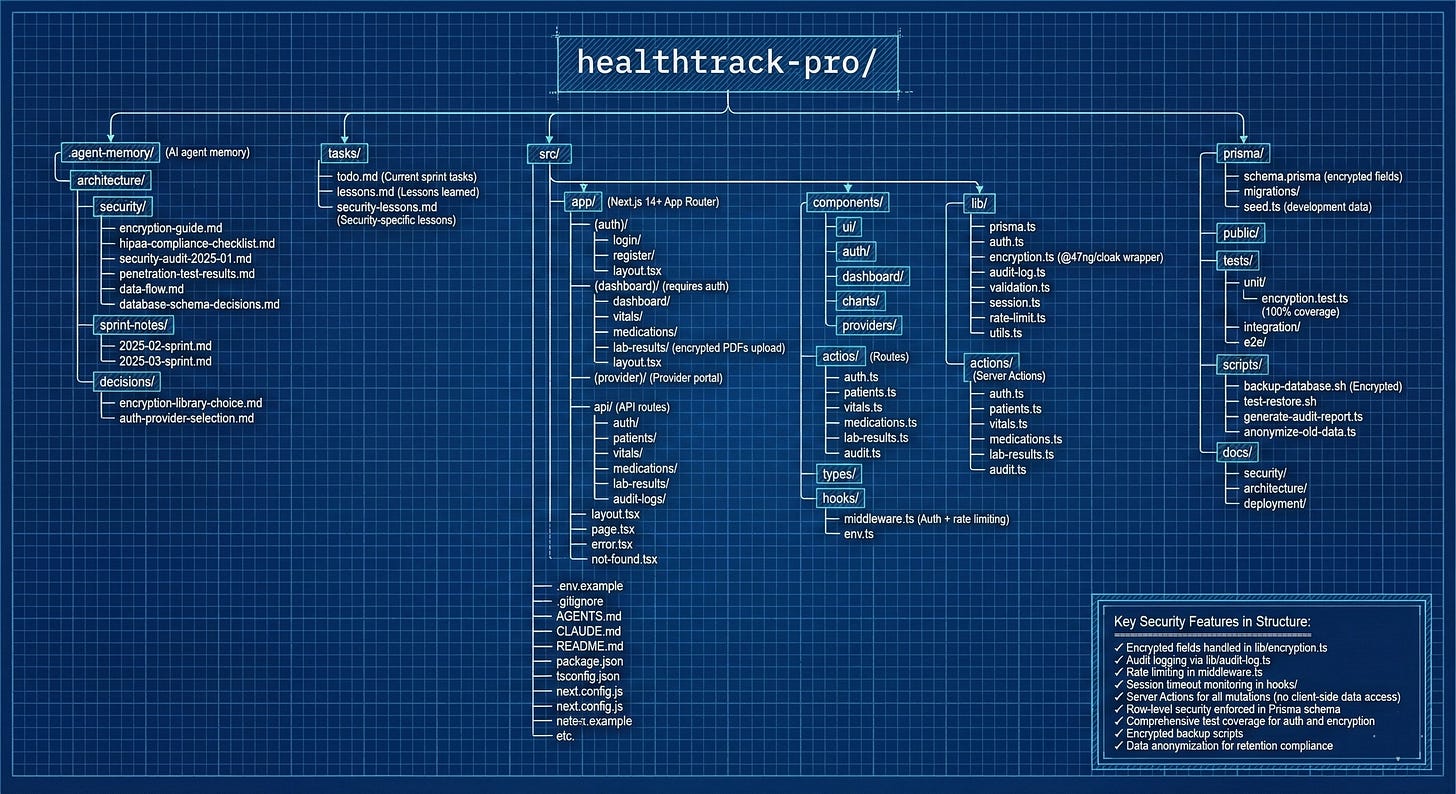

Real-world examples, including HIPAA-compliant healthcare applications

Best practices for building reliable AI coding agents

Static version specific facts vs Dynamic task specific evidence

Whether you’re a developer frustrated by inconsistent AI behavior, a team lead evaluating agent architectures, or simply curious about how modern AI systems manage knowledge, this deep dive into Vercel’s research offers actionable insights that apply far beyond coding agents—to any domain where AI must reliably access evolving information. These evolutions (for many) pale in comparison to the recent hype around OpenClaw/Moltbolt/Clawdbot, but I prefer the alignment measures applied by Anthropic.

Let’s begin with the problem that sparked this breakthrough.

The Memory Crisis

Imagine hiring a brilliant software engineer who knows every programming concept but can’t remember which version of your web framework you’re using. They confidently suggest outdated code patterns from 2023 when you’re running 2025 software. Yes how many of us have been stuck with versioning issues…

This is the reality of AI coding agents today.

Despite massive advances in AI capabilities, it looks like we may have been solving the wrong problem. We’ve treated agent memory like a filing cabinet—creating elaborate “Skills” folders that agents must remember to check—when what we needed was a Post-it note stuck to their monitor: information that’s just there, impossible to miss.

Recent research from Vercel, the company behind Next.js, reveals a stunning breakthrough/finding: that the most effective information is information the agent doesn’t have to decide to retrieve. By eliminating decision-making and using compressed indexing, they achieved something remarkable: AI agents went from 53% accuracy to 100% accuracy on coding tasks.

This article explains how—and why it matters for anyone building with AI.

Understanding the Problem

What Are Frameworks and Why Do They Break AI?

In software development, a framework is like the frame of a house—it provides structure so developers don’t build everything from scratch. Next.js is a popular framework for building modern websites. Like most frameworks, it evolves rapidly: version 13, version 14, version 15, version 16—each introducing new features and deprecating old ones.

So the problem is: AI models like Claude and GPT-4 are trained on data that freezes at a specific point in time. Every model has a knowledge cutoff—a date beyond which it knows nothing. If an AI was trained in January 2024, it knows nothing about features released in February 2024 or later.

The Real-World Consequence

Scenario: You’re building a website with Next.js 16 (released late 2024). You ask your AI assistant to implement caching.

What you expect:

async function getData() {

'use cache'; // New Next.js 16 way

return fetch('https://api.example.com/data');

}

What the AI suggests:

import { unstable_cache } from 'next/cache'; // Old Next.js 13 way

const getData = unstable_cache(async () => {

return fetch('https://api.example.com/data');

});

The AI isn’t hallucinating—it’s using the most statistically probable code from its training data. The problem? That training data is outdated. The word “unstable” in unstable_cache was literally a warning that this API might change. It did.

The Documentation Trap

You might think: “Just give the AI the latest documentation!”

Many developers try this. But modern framework documentation can be 40,000+ words. Dumping that into an AI conversation creates three problems:

Context bloat - Uses up the AI’s “working memory” before it even starts thinking about your problem

The retrieval decision problem - Even with docs available, the AI must decide to look at them

Cognitive overload - Too much information makes it harder to find relevant facts

Vercel’s research found that even with perfect, up-to-date documentation available, AI agents only checked it 50-53% of the time. Why? Because they felt confident—they had extensive training data about Next.js. They just didn’t realize it was outdated.

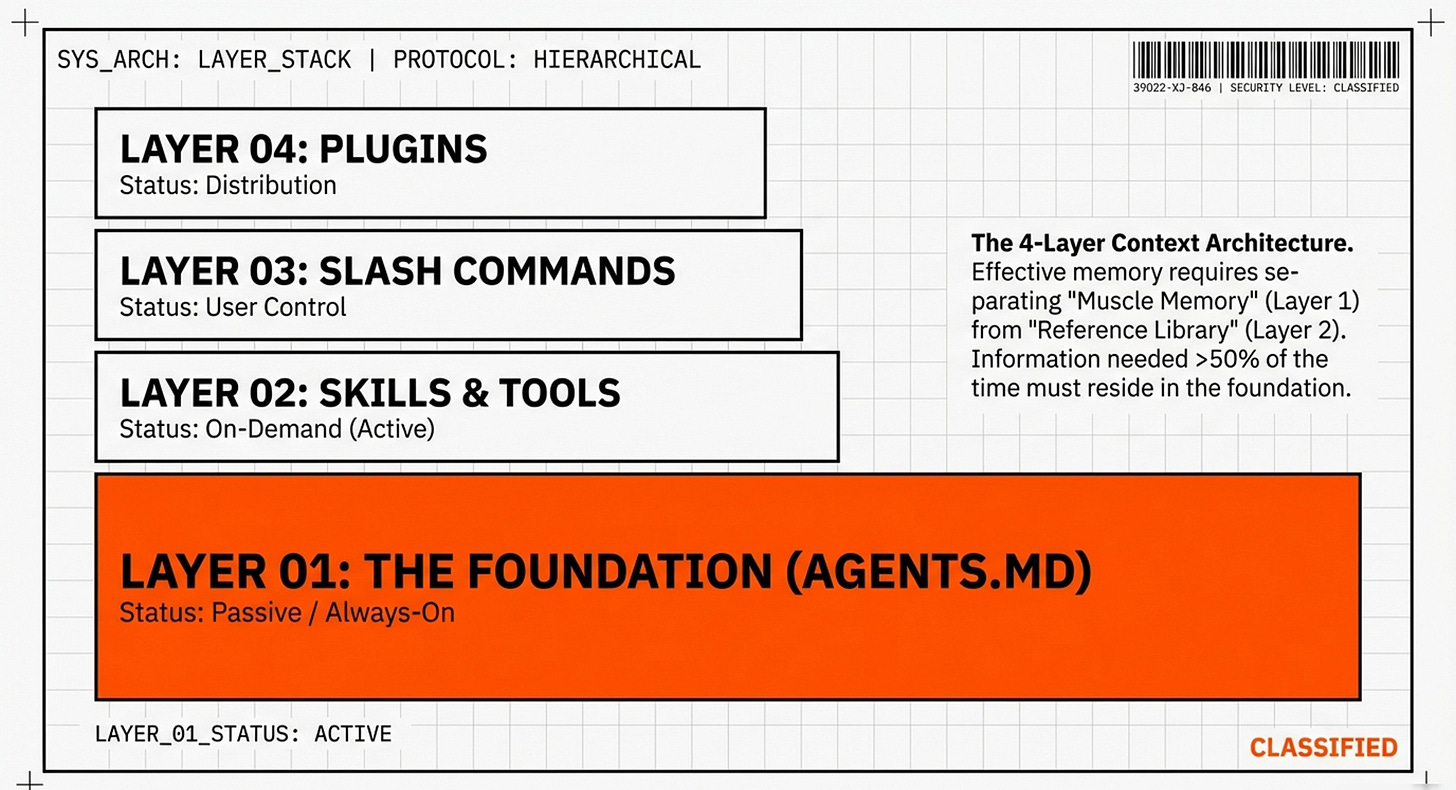

The Four Layers of AI Context

Before understanding the solution, you need to understand how modern AI agents structure knowledge. Think of it as organizing a library.

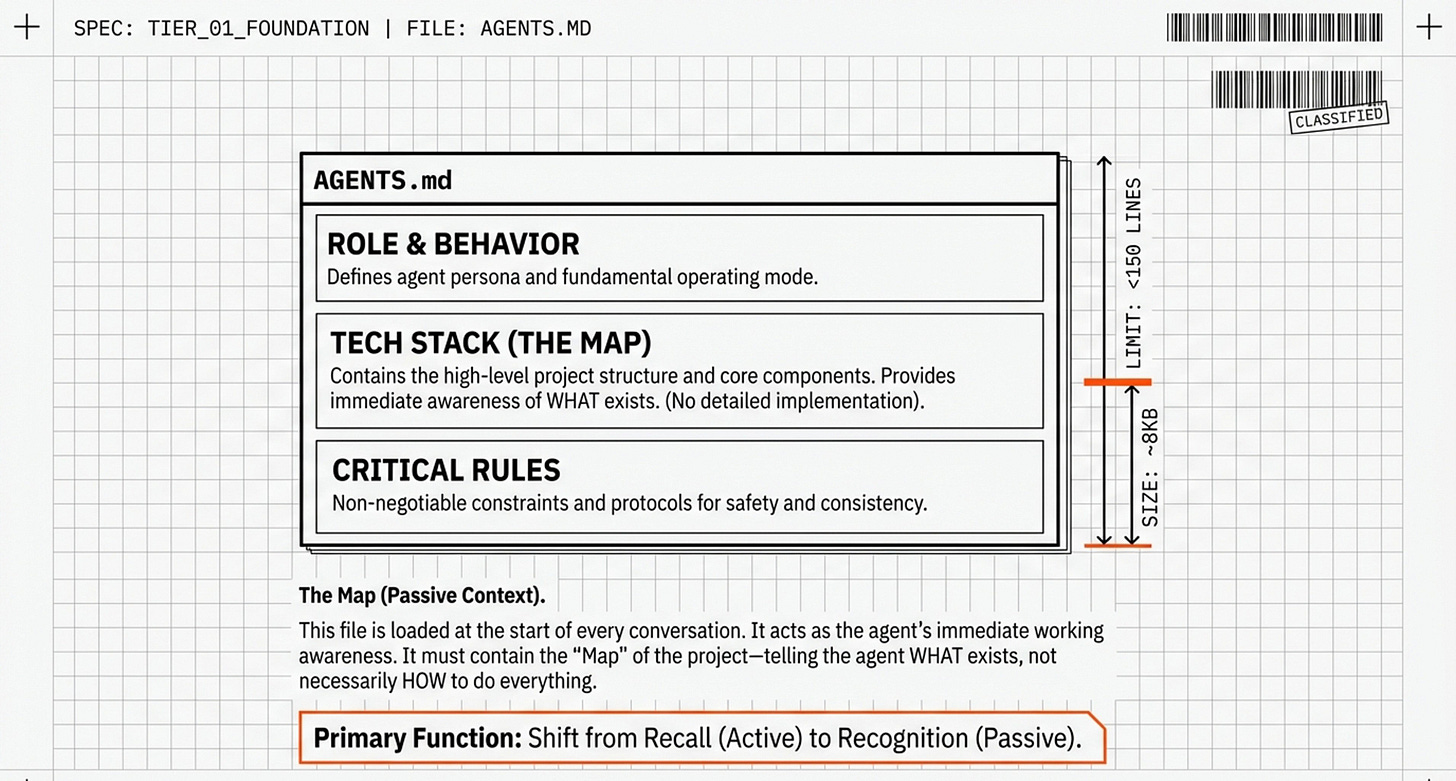

Layer 1: The Foundation (CLAUDE.md / AGENTS.md)

What it is: A file in your project folder that’s automatically loaded into every AI conversation.

Human analogy: Like knowing your own name and address—information so fundamental it’s always in your immediate awareness.

What goes here:

How the AI should communicate (tone, style)

Your project’s core technologies

Critical rules that should never be violated

File location:

your-project/

├── CLAUDE.md ← Always loaded

├── src/

└── package.json

Size limit: ~150 lines. Think of it as the AI’s “working memory”—if you overload it, important stuff gets lost.

Layer 2: Skills (The Library Shelves)

What it is: Separate folders with specialized documentation that load only when the AI decides to check them.

Human analogy: Reference books on a shelf—you pull them down when you need them.

The structure:

your-project/

├── skills/

│ ├── database-optimization/

│ │ └── SKILL.md

│ ├── api-design/

│ │ └── SKILL.md

│ └── react-patterns/

│ └── SKILL.md

The fatal flaw: The AI must decide to check these. And as we’ll see, that decision fails about half the time.

Layer 3: Slash Commands

What it is: Explicit commands you type to force specific actions.

Examples:

/search latest Next.js features- Forces web search/create-component- Triggers a workflow

Why it matters: Bypasses the AI’s decision-making entirely. You’re in control.

Layer 4: Plugins

What it is: Bundled capabilities, like apps on your phone.

Example: Claude’s Cowork plugin bundles file management, automation templates, and task workflows into one package.

Role: Distribution and packaging, not core intelligence.

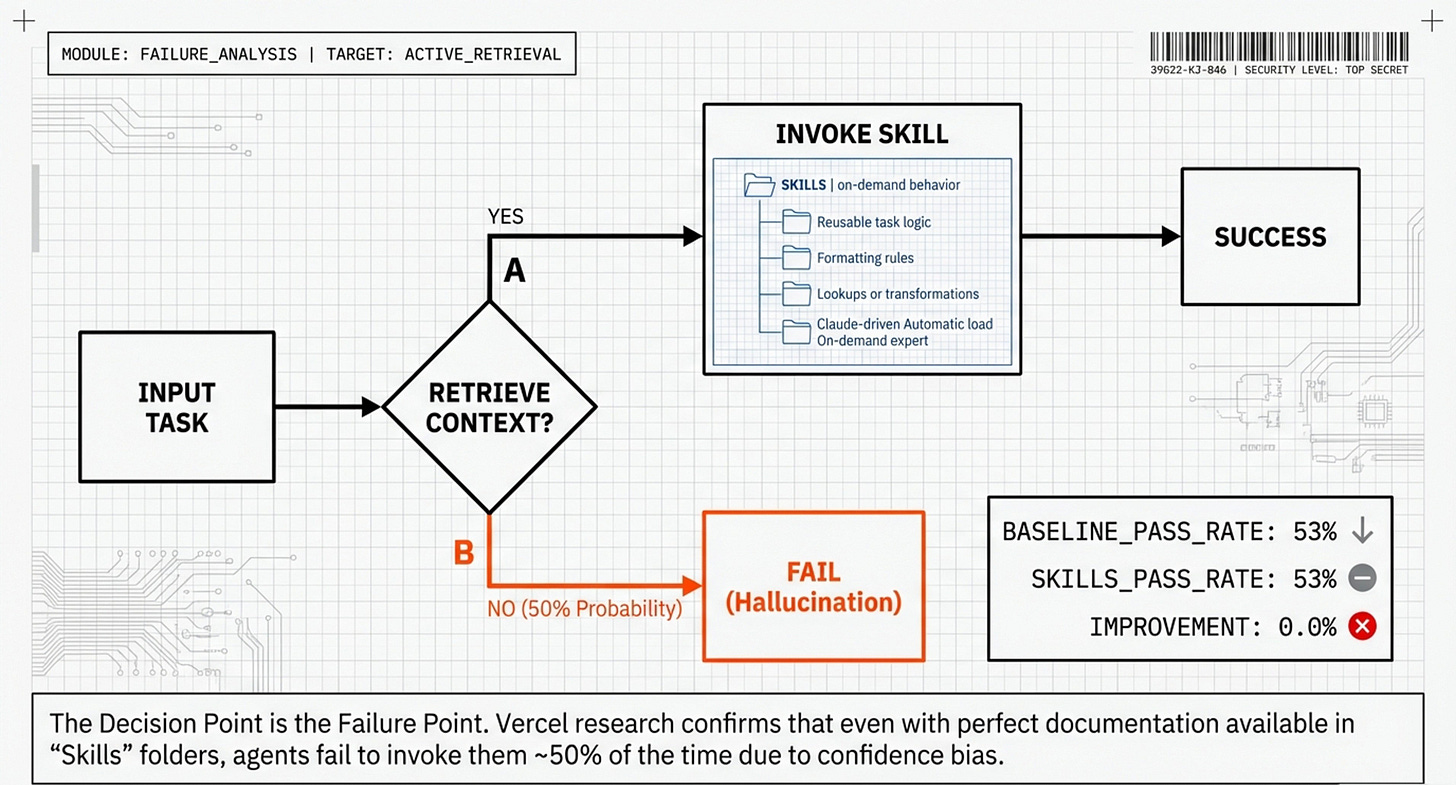

The Vercel Experiment: When Skills Fail

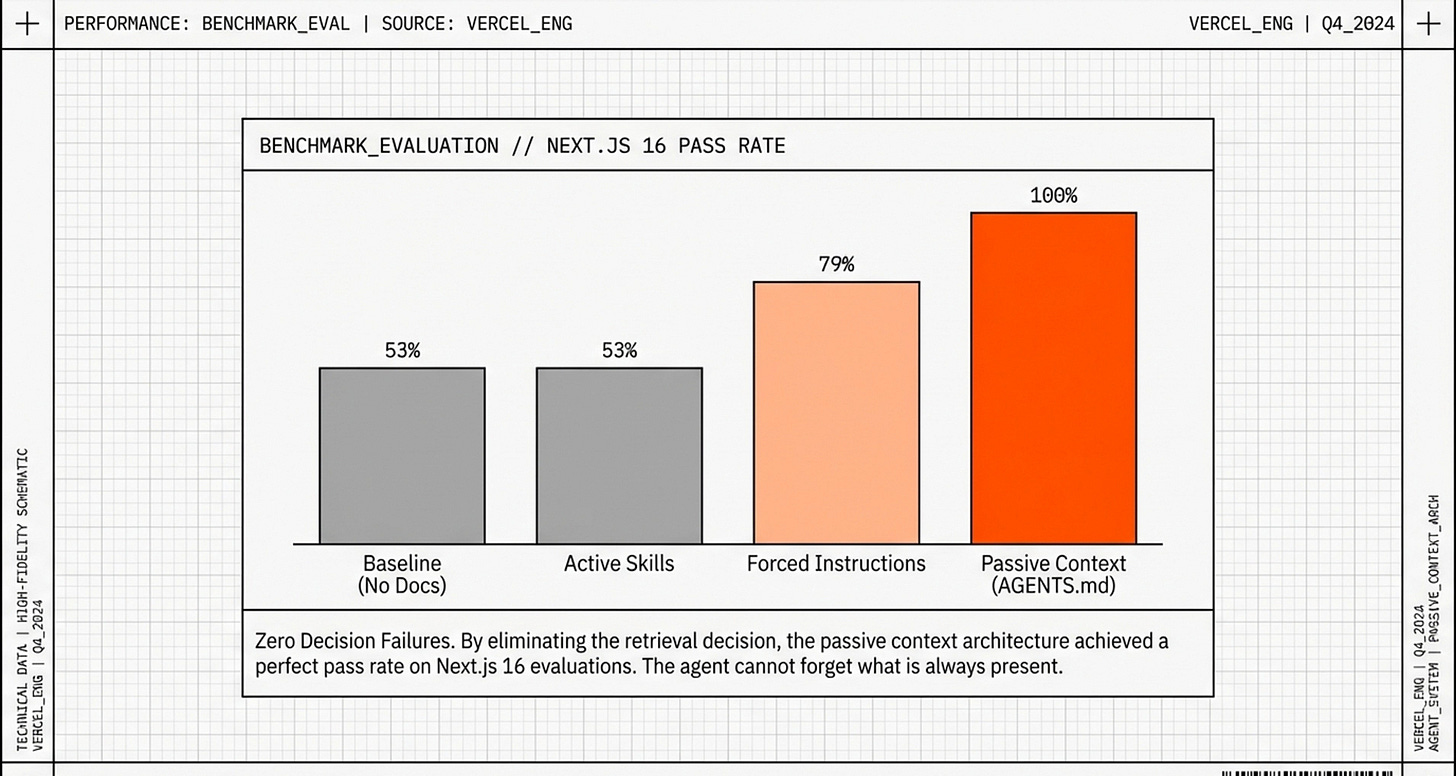

In late 2024, Vercel created a standardized test of real-world coding tasks:

Implement data caching

Create API routes

Set up database connections

Build forms with validation

They tested AI agents three different ways.

Test 1: Baseline (No Help)

Setup: AI with standard training, no extra documentation

Result: 53% success rate

The AI failed because it used outdated Next.js 13 patterns when the test required Next.js 16.

Test 2: Traditional Skills (The Shocking Result)

Setup: Perfect, comprehensive Next.js 16 documentation organized as Skills (40KB of content)

Expected result: 80-90% success

Actual result: 53% success

Zero improvement.

Analyzing the logs revealed why: the AI only checked the Skills documentation 50-53% of the time. For the other ~50%, it relied on outdated training data and failed.

Why didn’t the AI check?

→ Confidence bias: The AI had lots of Next.js training data, so it felt confident. It didn’t realize its knowledge was obsolete.

→ Pattern matching: When it saw “implement caching,” it automatically used familiar patterns from training—no conscious thought to look up documentation.

→ Cognitive economics: Checking a Skill requires extra steps. The easier path is just generating code from memory.

Test 3: Forcing with “CRITICAL” Instructions

Developers tried emphatic commands:

CRITICAL RULE: You MUST check the Next.js skill for ANY Next.js task.

NEVER use training data. ALWAYS check /skills/nextjs-16/SKILL.md.

This is MANDATORY.

Result: 60-65% success

Better, but still unreliable. The instructions competed with other directives (”be concise,” “respond quickly”), got buried in long conversations, and the AI sometimes checked the skill but then ignored what it read.

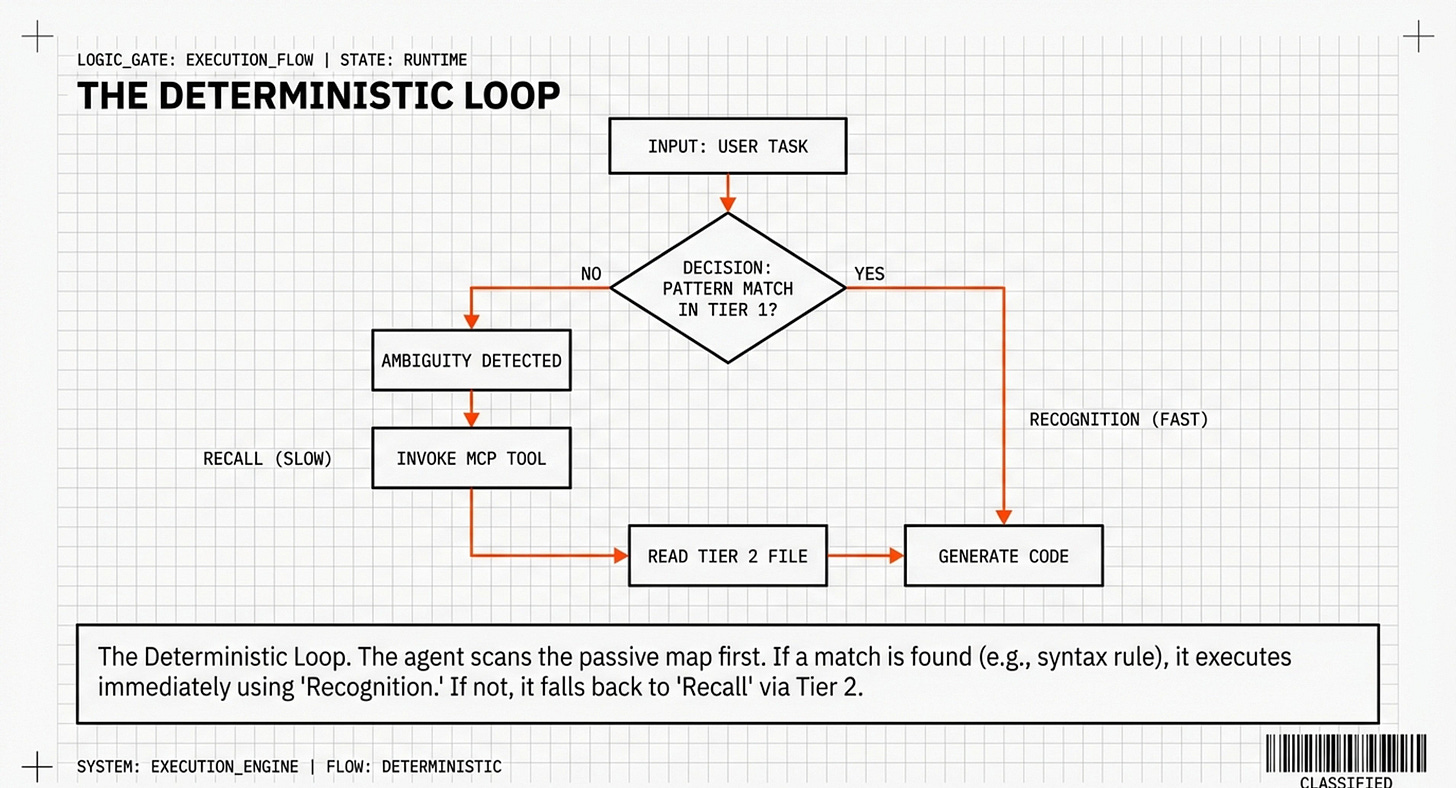

The Fundamental Insight

You can’t reliably force an AI to make the right decision every time.

No matter how emphatic your instructions, the AI still must:

Recognize a decision point exists

Evaluate whether to check external knowledge

Choose to invoke the skill

Actually apply what it learns

Each step is a failure point.

The solution? Eliminate the decision entirely.

The Breakthrough: Passive Context

Vercel’s radical idea: Stop making the agent choose. Instead of Skills that require conscious retrieval, embed knowledge directly into the agent’s “working memory”—the foundation layer that’s always loaded.

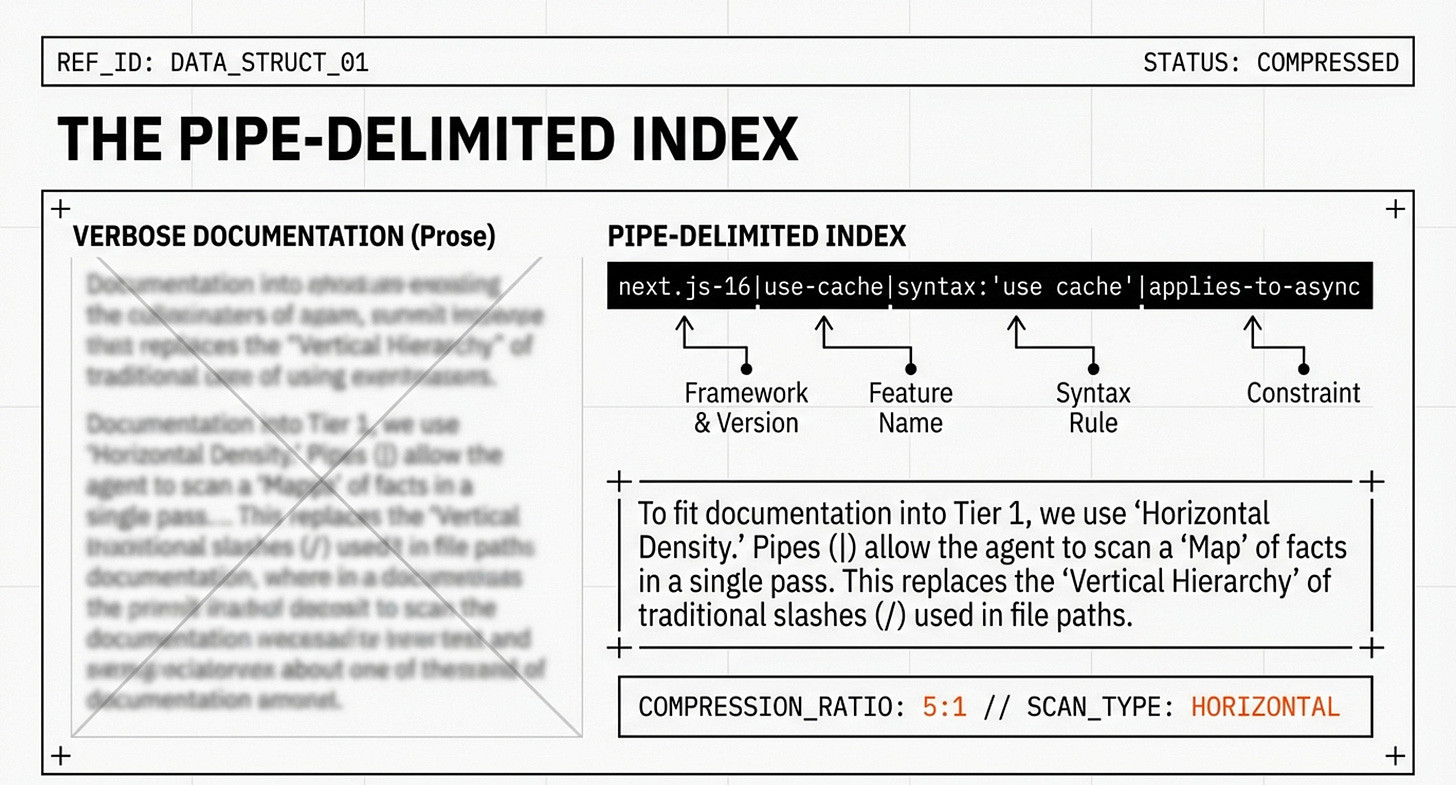

But there’s a problem: you can’t dump 40,000 words of documentation into every conversation. That creates context bloat.

The solution: compression.

How Humans Actually Use Documentation

When you look up an API in documentation, you encounter something like this:

Traditional documentation (93 words):

The

'use cache'directive is a powerful new feature in Next.js 16 that simplifies caching for async functions. To use it, simply add the string'use cache'at the top of your async function body. This directive replaces the previousunstable_cacheAPI, which was more verbose and harder to use. The'use cache'directive automatically handles cache invalidation and revalidation, making it much easier to create performant applications.

What you actually extract (15 words):

Feature:

'use cache'directiveWhere: Top of async function

Replaces:

unstable_cache

You mentally compress 93 words into 15 words of actionable information.

Vercel realized they could do this systematically for all documentation.

The Compressed Index Format

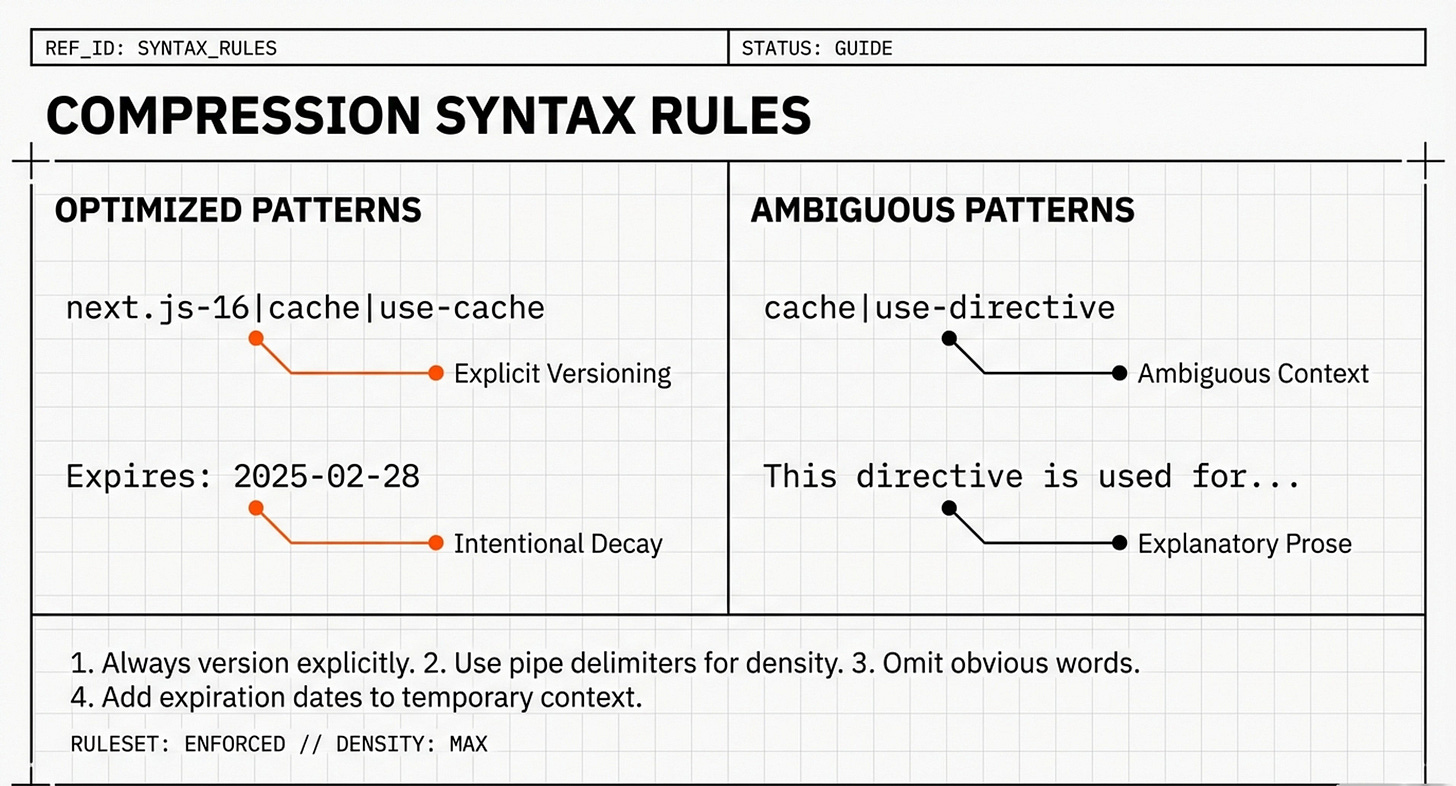

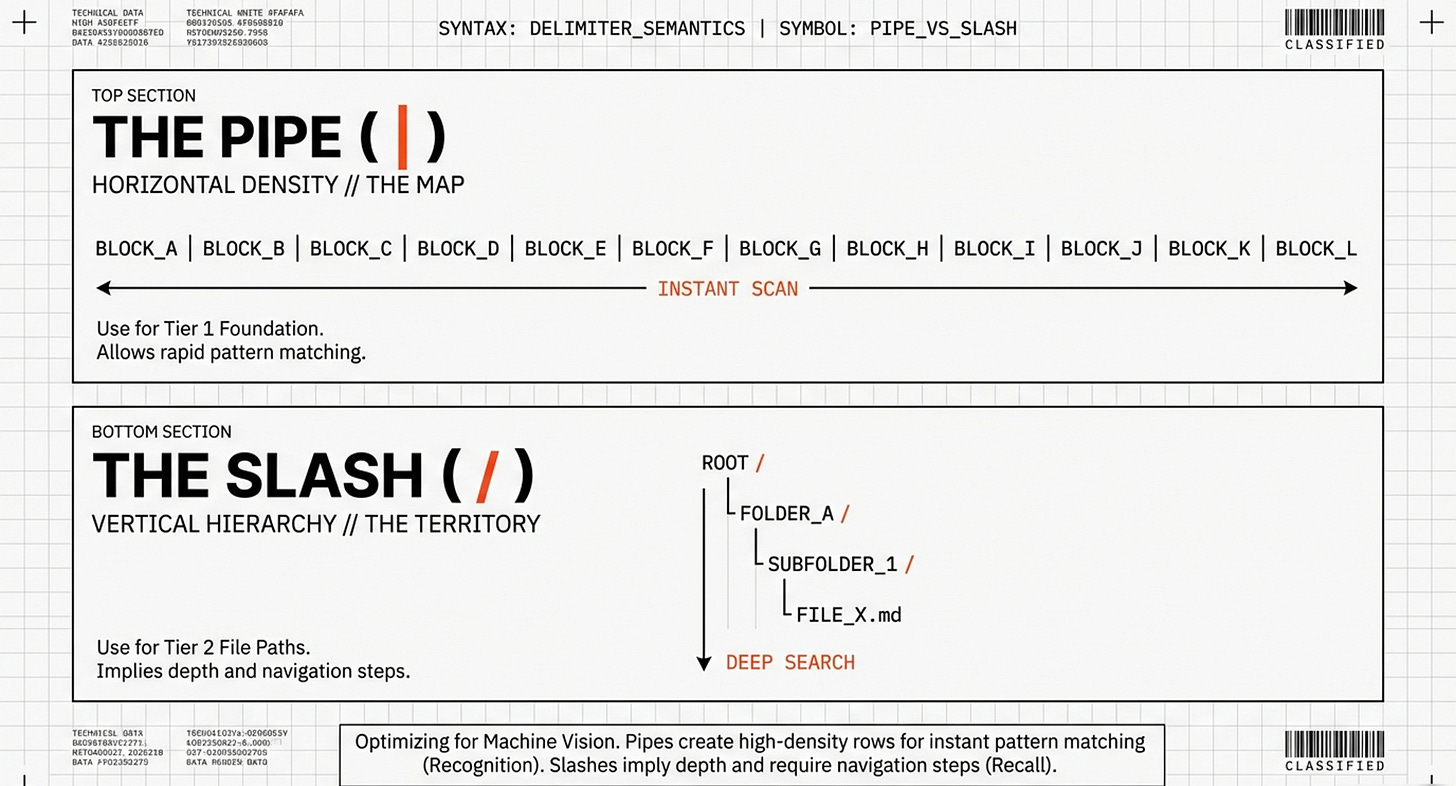

Instead of prose, they created a dense reference using pipe delimiters (|):

next.js-16|use-cache|syntax:'use cache'|applies to async functions|replaces unstable_cache

next.js-16|connection|import from 'next/server'|returns promise|await before db queries

next.js-16|server-actions|'use server' at top|can be in separate file|auto-serializes

react-19|server-components|async-components|no-hooks|fetch-directly

Breaking down one line:

next.js-16|use-cache|syntax:'use cache'|applies to async functions|replaces unstable_cache

next.js-16 = Framework and version (prevents mixing versions)

use-cache = Feature name

syntax:’use cache’ = How to write it

applies to async functions = Where it works

replaces unstable_cache = What it supersedes

Why This Works

Extreme density:

Traditional prose: ~93 words for these facts

Compressed index: ~15 words

Compression ratio: 6:1

Instant pattern matching:

AI sees in code: async function getData() {...}

AI scans index: "next.js-16|use-cache|applies to async functions"

AI recognizes: "This is async, use-cache applies"

AI applies: Adds 'use cache' directive

Version clarity:

next.js-13|cache|unstable_cache|manual invalidation

next.js-16|cache|use-cache-directive|automatic invalidation

The AI can distinguish between versions instantly.

The Complete Structure

Vercel compressed their entire 40KB documentation into ~8KB:

# AGENTS.md

## Next.js 16 API Index

### Caching

next.js-16|use-cache|syntax:'use cache'|async-functions|replaces-unstable_cache

next.js-16|cache-tags|import {cacheTag}|granular-invalidation

next.js-16|revalidate-path|import {revalidatePath}|after-mutations

### Data Fetching

next.js-16|connection|import-next/server|await-before-db

next.js-16|fetch|auto-deduped|specify-revalidate-or-no-store

### Routing

next.js-16|route-handlers|app/api/route.ts|export-GET-POST

next.js-16|dynamic-routes|[param]-syntax|access-via-params

[... ~150 total lines covering all major APIs ...]

## Architecture Patterns

pattern|data-fetch|server-components-only|pass-props-to-client

pattern|mutations|server-actions-only|revalidate-after

pattern|errors|error.tsx-at-route|must-be-client-component

Statistics:

Original: 40,000 tokens (~40KB)

Compressed: 8,000 tokens (~8KB)

Compression: 5:1 ratio

Information retained: ~95% of actionable facts

What was sacrificed:

Explanatory prose

Multiple examples

Tutorial walkthroughs

Marketing language

What was preserved:

All syntax and usage

Version-specific information

Deprecations

Critical constraints

The Results: 100% Success

With the compressed index in AGENTS.md (always loaded), Vercel re-ran their benchmark:

Approach Pass Rate Why Baseline (no help) 53% Outdated training data Skills (40KB docs) 53% AI didn’t check 47-50% of time Forced instructions 60-65% Unreliable, inconsistent Compressed index (8KB) 100% No decision needed

Why Literally Perfect Performance?

1. Zero decision failures

Old way (Skills):

Task → Should I check skill? → 50% said NO → Fail

New way (Passive index):

Task → Info already visible → Success

2. Immediate pattern matching

The AI doesn’t think “Should I look this up?” It sees the syntax in its context and recognizes it matches the current task.

3. No context competition

The 8KB index is so efficient it doesn’t crowd out other important context like your code or conversation history.

4. Deterministic, not probabilistic

Deterministic: Same input → same output (100%)

Probabilistic: Same input → variable output (53-65%)

The compressed index converted an uncertain multi-step process into reliable single-step pattern matching.

Before and After Example

Task: “Create an API route that fetches user data with caching”

Before (Skills - 53% pass rate):

import { NextResponse } from 'next/server';

import { unstable_cache } from 'next/cache'; // Outdated!

const getUsers = unstable_cache(async () => {

return fetch('https://api.example.com/users').then(r => r.json());

});

export async function GET() {

const users = await getUsers();

return NextResponse.json(users);

}

After (Passive index - 100% pass rate):

import { NextResponse } from 'next/server';

async function getUsers() {

'use cache'; // Correct Next.js 16 pattern

const response = await fetch('https://api.example.com/users', {

next: { revalidate: 3600 } // Revalidate every hour

});

return response.json();

}

export async function GET() {

const users = await getUsers();

return NextResponse.json(users);

}

The AI had immediate access to:

next.js-16|use-cache|syntax:'use cache'|async-functions

next.js-16|fetch|specify-revalidate-or-no-store

It pattern-matched these facts and generated correct, modern code.

Why This Works: The Psychology

Though this should not matter, and yes, I know, algorithms are not “conscious”, I like to use this corollary of this approach which aligns with established cognitive psychology:

Automatic vs. Controlled Processing

Controlled (Skills): Slow, conscious, effortful—like solving a math problem

Automatic (Passive): Fast, unconscious, effortless—like recognizing a face

When information is in passive context, using it is automatic pattern recognition. When it requires invoking a skill, it’s controlled processing that can fail.

Recognition vs. Recall

Recall (Skills): “Do I need docs?” → “Which skill?” → “Where in the skill?”

Recognition (Passive): Scan visible index → “That matches!” → Apply

Recognition is dramatically easier than recall.

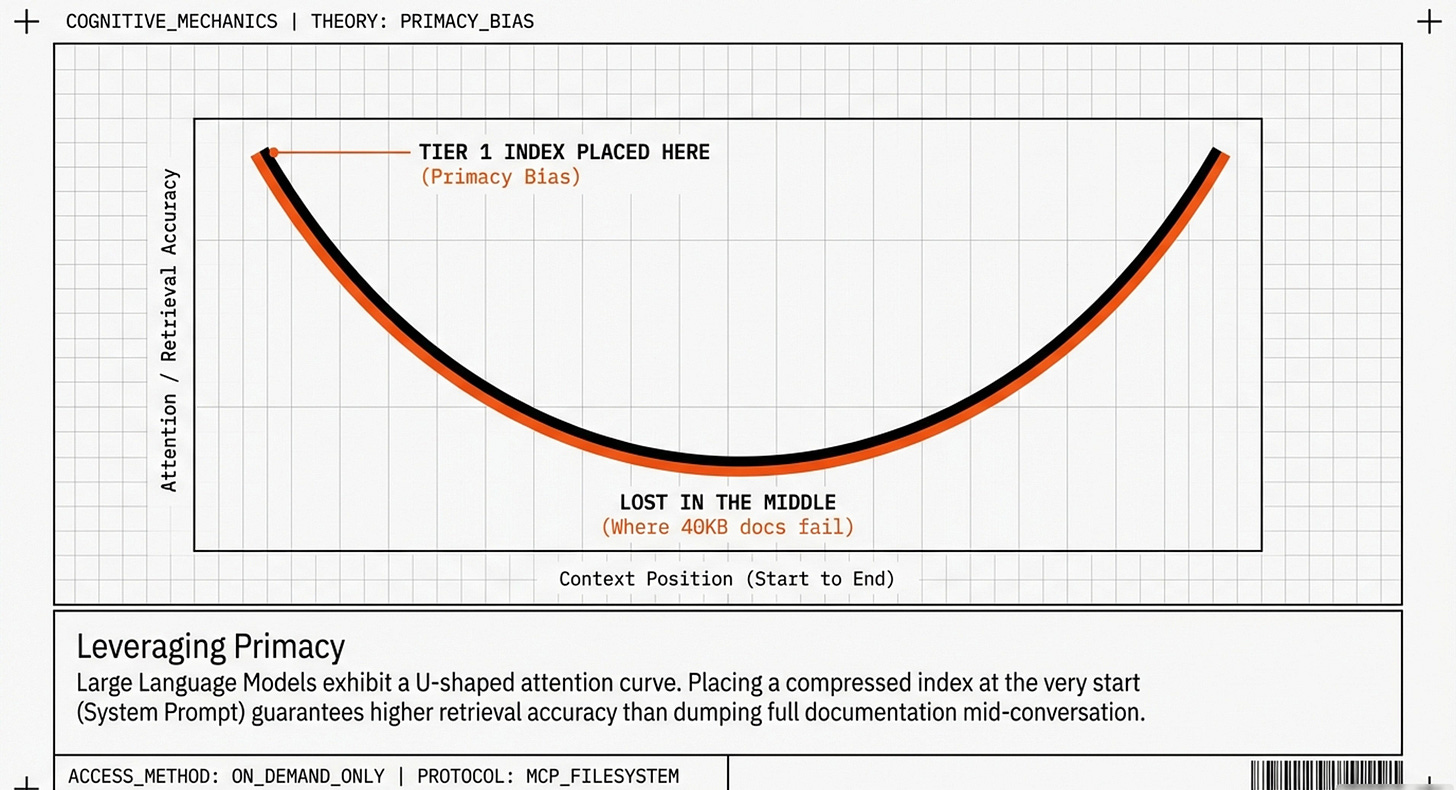

The “Lost in the Middle” Effect

Stanford research (Liu et al., 2024) found that AI models struggle to retrieve facts from the middle of long documents:

Document position: Success rate:

Beginning (0-10%) 95%

Middle (40-60%) 45% ← Major drop

End (90-100%) 92%

A 40KB doc placed mid-conversation means critical facts often land in the “forgotten middle.” An 8KB index at the beginning stays in the high-performance zone.

Building Your Own System

So here are a few thoughts on how to implement this for any project. I take these as evolving steps, and technologies change. But I like it’s modular approach (which is exactly what is going to win the “Agentic wars”:

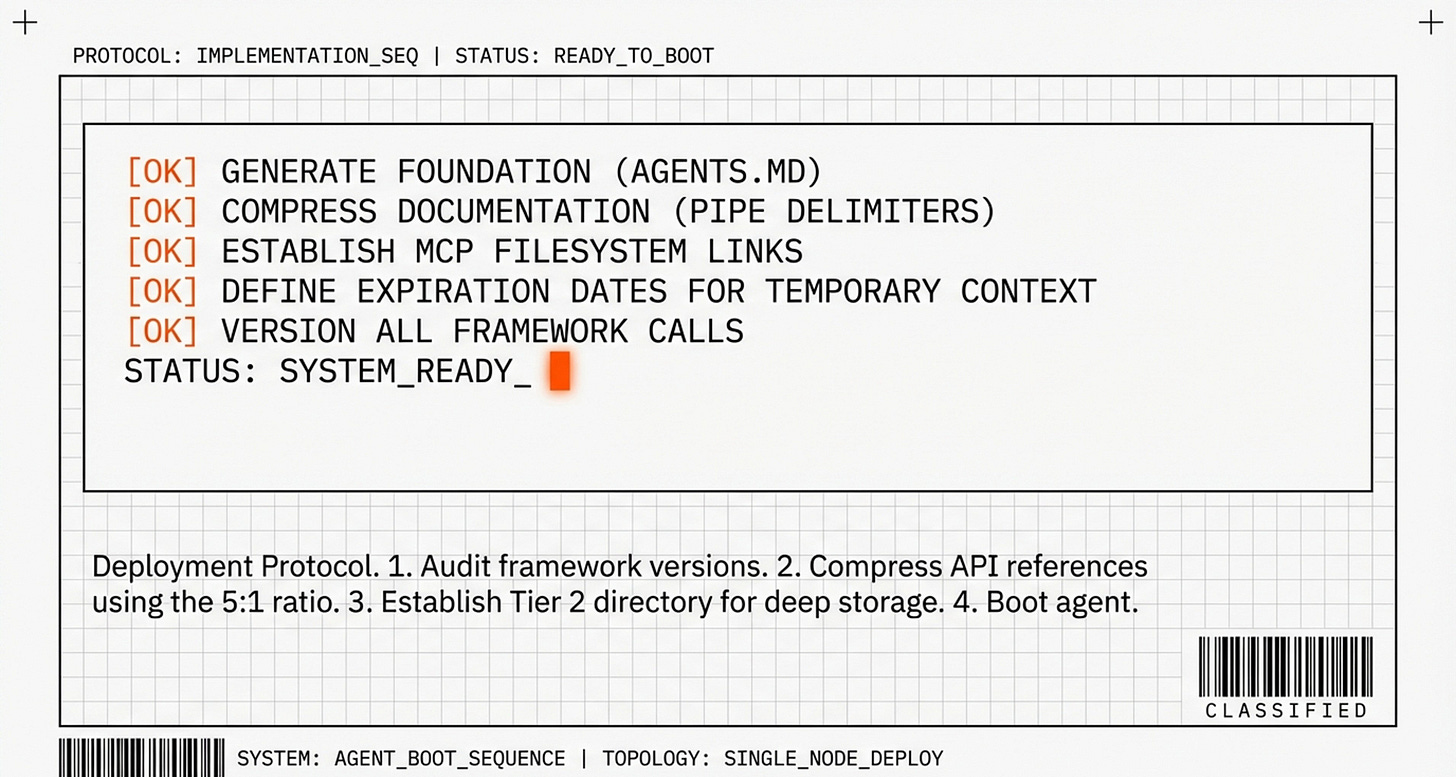

Step 1: Generate Your Foundation (Next.js)

If you’re using Next.js, Vercel provides automation:

npx @next/codemod@canary agents-md

This creates:

your-project/

├── AGENTS.md ← 8KB compressed index

├── .next-docs/ ← 40KB full docs (optional reference)

└── src/

Step 2: Creating Your Custom CLAUDE.md

Even with an auto-generated AGENTS.md, you should create a project-specific CLAUDE.md for behavioral instructions and project context.

What CLAUDE.md Should Contain

CLAUDE.md is your project’s “working memory”—the information that should be present in every conversation. Think of it as the AI’s job description, workplace rules, and immediate context all in one place.

The five essential components:

Role & Behavior (20-30 lines) - Who the AI is, how it should work, and workflow orchestration rules

Tech Stack (20-30 lines) - Compressed index of your technologies in pipe-delimited format

Architecture Rules (40-60 lines) - Your project’s patterns, constraints, and non-negotiable requirements

Current Context (20-30 lines) - Temporary sprint/project information with expiration dates

Memory Management (10-20 lines) - Rules for when to store what information

Total budget: ~150 lines maximum

Complete CLAUDE.md Template Example

markdown

# Project: [Your Project Name]

## Role & Behavior

You are a [senior/mid-level] [role] working on [project name], [brief description].

### Communication Style

- Be direct and concise

- Explain decisions briefly when making architectural choices

- Ask clarifying questions when requirements are ambiguous

- Avoid over-explaining basic concepts the team already knows

### Workflow Orchestration

**Plan Mode Default:**

- Enter plan mode for ANY non-trivial task (3+ steps or architectural decisions)

- If something goes sideways, STOP and re-plan immediately—don't keep pushing

- Use plan mode for verification steps, not just building

- Write detailed specs upfront to reduce ambiguity

**Verification Before Done:**

- Never mark a task complete without proving it works

- Diff behavior between main and your changes when relevant

- Ask yourself: "Would a staff engineer approve this?"

- Run tests, check logs, demonstrate correctness

**Self-Improvement Loop:**

- After ANY correction from the user: update `tasks/lessons.md` with the pattern

- Write rules for yourself that prevent the same mistake

- Ruthlessly iterate on these lessons until mistake rate drops

- Review lessons at session start for relevant project

**Autonomous Bug Fixing:**

- When given a bug report: just fix it. Don't ask for hand-holding

- Point at logs, errors, failing tests—then resolve them

- Zero context switching required from the user

- Go fix failing CI tests without being told how

**Demand Elegance (Balanced):**

- For non-trivial changes: pause and ask "is there a more elegant way?"

- If a fix feels hacky: "Knowing everything I know now, implement the elegant solution"

- Skip this for simple, obvious fixes—don't over-engineer

- Challenge your own work before presenting it

### Approach

- Prioritize type safety and error handling

- Follow existing patterns in the codebase

- Suggest improvements when you see technical debt

- When uncertain about APIs or syntax, consult the AGENTS.md index first

## Tech Stack

[framework@version]|[key-feature]|[syntax-or-constraint]|[additional-facts]

next.js-16|app-router|server-components-first

typescript|strict-mode|no-any-types|prefer-interfaces-over-types

prisma|postgresql|singleton-client-pattern|import-from-lib-prisma

tailwind|shadcn-ui|component-library|utility-classes-only

react-19|server-components|async-await|use-server|suspense-boundaries

zustand|client-state-management|minimal-use|prefer-server-state

## Architecture Rules

### Data Flow

rule|data-fetching|server-components-only|no-client-fetch

rule|mutations|server-actions-only|must-revalidate-after

rule|state-management|server-state-via-props|client-state-zustand-only

rule|error-handling|error-boundary-at-route-level|user-friendly-messages

### Code Quality

rule|typescript|strict-mode|all-props-typed|no-any-ever

rule|files|colocate-related|max-200-lines|split-if-larger

rule|functions|single-responsibility|extract-if-complex

rule|testing|unit-tests-for-utils|integration-tests-for-features

rule|security|env-vars-for-secrets|never-commit-keys|validate-all-inputs

### Performance

rule|queries|max-100-records|pagination-required

rule|images|next-image-component|lazy-load-heavy-components

rule|caching|aggressive-server-side|minimal-client-side

### API Conventions

api|rest|naming|noun-plural|/users-not-/getUsers

api|rest|methods|GET-read|POST-create|PUT-replace|PATCH-update|DELETE-remove

api|rest|status|200-ok|201-created|400-bad-request|404-not-found|500-server-error

api|rest|errors|consistent-format|message-and-code-and-details

### Database Patterns

db|relations|always-both-sides|cascade-carefully

db|queries|use-indexes|explain-analyze-slow-queries

db|migrations|never-edit-manually|test-on-staging-first

db|soft-deletes|deletedAt-timestamp|not-hard-deletes

## Current Context (Expires YYYY-MM-DD)

**Sprint Goal:** [Primary objective for this sprint]

**Status:**

- [Feature A] ✅ Complete

- [Feature B] 🔄 In Progress

- [Feature C] ⏳ Not Started

**Active Blockers:**

- [Blocker description] (ETA: [date])

- [Another blocker] (Waiting on: [dependency])

**Completed This Sprint:**

- ✅ [Completed item 1]

- ✅ [Completed item 2]

- ✅ [Completed item 3]

**Next Priorities:**

1. [Priority 1]

2. [Priority 2]

3. [Priority 3]

**Important Decisions:**

- [Recent architectural decision that affects current work]

- [Technology choice or pattern change]

## Memory Management

**PERMANENT → This file (CLAUDE.md):**

- Architecture decisions and rationale

- Core technology choices and versions

- API contracts and interface definitions

- Security patterns and requirements

- Performance constraints and rules

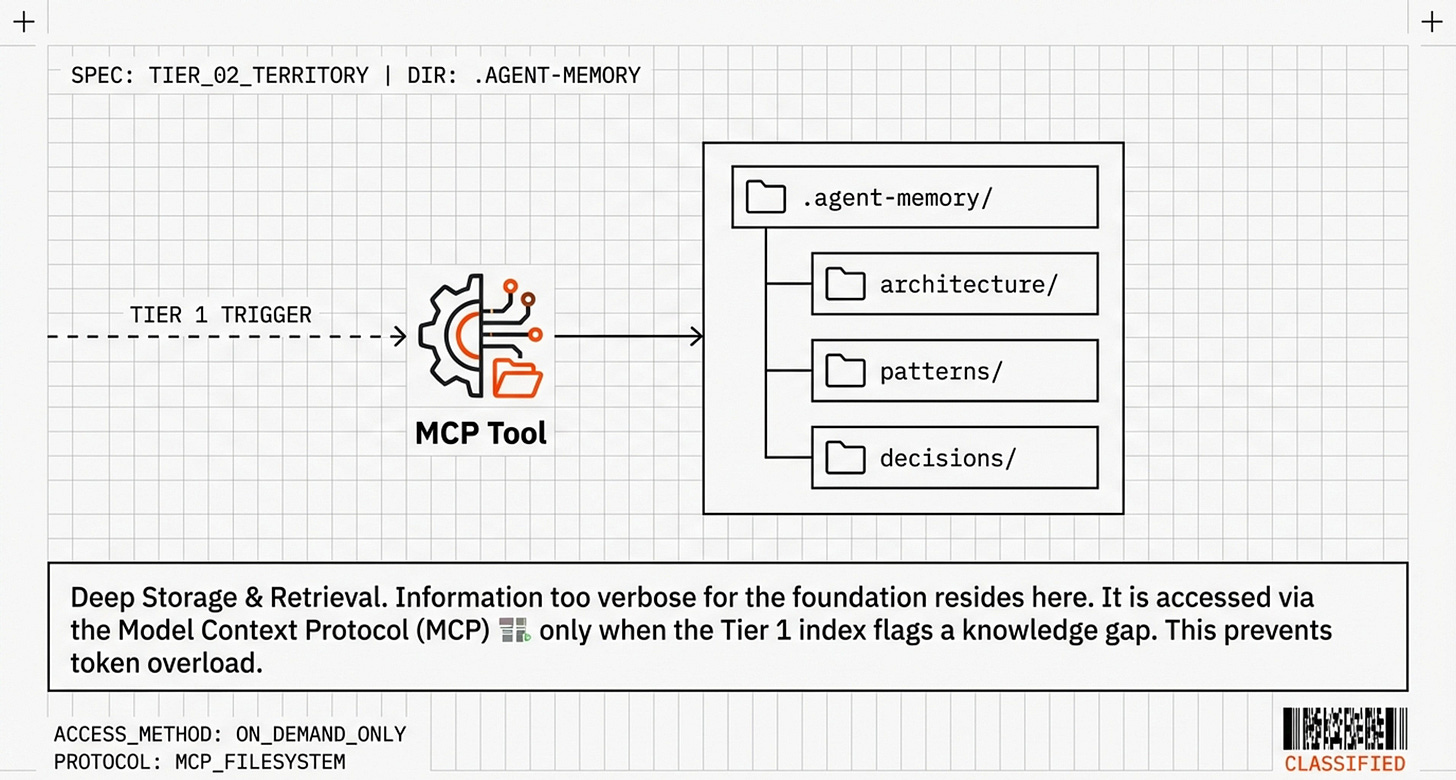

**TEMPORARY → .agent-memory/ (with expiration dates):**

- Sprint progress and blockers

- Feature implementation details

- Refactoring notes and outcomes

- Meeting decisions and action items

- Temporary workarounds

**DEEP CONTEXT → .agent-memory/ (for searching when needed):**

- Full documentation and examples

- Historical design discussions

- Performance optimization history

- Code review feedback patterns

- Detailed implementation guides

**NEVER STORE → Anywhere:**

- Actual user data or PII

- Real API keys or secrets

- Production credentials

- Customer information

## Task Management

When managing work, follow these conventions:

1. **Plan First:** Write plan to `tasks/todo.md` with checkable items

2. **Verify Plans:** Check in before starting implementation

3. **Track Progress:** Mark items complete as you go

4. **Explain Changes:** High-level summary at each step

5. **Document Results:** Add review section to `tasks/todo.md`

6. **Capture Lessons:** Update `tasks/lessons.md` after correctionsStep 3: Compression Techniques

Use pipe delimiters for density:

❌ VERBOSE (60 words):

The 'use cache' directive was introduced in Next.js 16 as a replacement

for the previous unstable_cache API. You should place this directive at

the top of async functions to enable caching.

✅ COMPRESSED (8 words):

next.js-16|use-cache|syntax:'use cache'|async-only|replaces-unstable_cache

Always include versions:

✅ GOOD:

next.js-16|cache|use-cache-directive|auto-invalidation

next.js-13|cache|unstable_cache|deprecated

❌ BAD (ambiguous):

cache|use-directive|auto-invalidation

Omit obvious words:

❌ WORDY:

You should use Server Components for data fetching and pass data as props.

✅ COMPRESSED:

pattern|data-fetch|server-components|pass-props-to-client

Real-World Example: Healthcare Application

Here’s how this looks for a HIPAA-compliant healthcare application:

markdown

# Project: HealthTrack Pro

## Role & Behavior

You are a senior full-stack engineer working on HealthTrack Pro,

a HIPAA-compliant health tracking application.

**CRITICAL:** This is a healthcare application. Always consider:

- Patient data privacy (HIPAA compliance)

- Data encryption at rest and in transit

- Audit logging for all data access

- Input validation for health metrics

### Communication Style

Be direct and thorough. Healthcare requires precision.

Explain security implications of any architectural decisions.

Flag potential compliance issues immediately.

### Workflow Orchestration

**Plan Mode Default:**

- ALWAYS use plan mode for ANY feature touching patient data

- Security-critical changes require explicit verification steps

- Never proceed without considering HIPAA implications

**Verification Before Done:**

- All PII fields must be encrypted—verify in database

- Audit logs must be present—check log tables

- Access controls must be tested—demonstrate with different user roles

**Self-Improvement Loop:**

- Update `tasks/security-lessons.md` after ANY security correction

- Review security lessons before touching auth or data access code

**Compliance First:**

- If unsure about HIPAA requirements, ask before implementing

- When proposing solutions, include security/compliance analysis

- Document why each security decision was made

## Tech Stack

next.js-16|app-router|server-components

typescript|strict-mode|zod-validation|no-any-ever

postgresql|prisma|encrypted-fields|row-level-security

next-auth|credentials-provider|session-tokens|jwt

react-19|server-actions|form-validation|progressive-enhancement

@47ng/cloak|aes-256-gcm|field-level-encryption|hipaa-compliant

## Architecture Rules

### Security (HIPAA Compliance)

rule|encryption|all-pii-fields|aes-256-gcm|encrypt-before-store

rule|access-logs|log-all-read-write|retain-7-years|include-ip-and-user

rule|sessions|30-min-timeout|re-auth-for-sensitive-operations

rule|data-validation|zod-schemas|sanitize-all-inputs|never-trust-client

rule|api-security|rate-limiting|authentication-required|validate-permissions

### Data Handling (Patient Privacy)

rule|pii|never-log-plain-text|encrypt-before-storage|no-pii-in-error-messages

rule|pii|no-pii-in-urls|no-pii-in-query-params|use-uuids-only

rule|data-sharing|patient-consent-required|audit-trail-mandatory

rule|data-deletion|soft-delete-only|anonymize-after-retention-period

rule|backups|encrypted-backups|test-restore-monthly|off-site-storage

### Performance (Healthcare Context)

rule|queries|max-100-records|pagination-required|no-full-table-scans

rule|images|compress-before-upload|max-5mb|scan-for-malware

rule|caching|no-cache-pii|cache-aggregates-only|60-second-max-ttl

### Code Quality

rule|types|strict-typescript|all-props-typed|no-any

rule|testing|100%-coverage-auth|integration-tests-for-data-access

rule|errors|user-friendly-messages|log-technical-details|never-expose-internals

rule|audit|log-every-data-access|include-before-after-state|immutable-logs

## Current Context (Expires 2025-03-15)

**Sprint Goal:** Patient dashboard redesign

**Status:**

- Metrics display ✅ Complete

- Charts visualization 🔄 In Progress

- Lab results export ⏳ Not Started

**Active Blockers:**

- None currently

**Completed This Sprint:**

- ✅ Patient dashboard layout redesign

- ✅ Real-time vitals monitoring

- ✅ Medication tracking interface

- ✅ Security audit passed (Jan 15)

**Next Priorities:**

1. Lab results PDF upload and storage (encrypted)

2. Medication reminders system

3. Provider portal for doctors

**Important Security Notes:**

- All new features must pass security review before deployment

- Lab result PDFs require AES-256-GCM encryption

- Medication data requires additional consent tracking

## Memory Management

**PERMANENT → This file:**

- Security patterns and encryption methods

- HIPAA compliance requirements

- Architecture decisions for data handling

- Audit logging requirements

**TEMPORARY → .agent-memory/:**

- Sprint progress and feature implementations

- Performance optimization notes

- User feedback and feature requests

**DEEP CONTEXT → .agent-memory/architecture/security/:**

- Detailed encryption implementation guides

- HIPAA compliance checklists

- Security audit reports and remediation plans

- Penetration test results and fixes

**NEVER STORE → Anywhere:**

- Actual patient data (names, SSNs, medical records)

- Real email addresses or phone numbers

- PHI (Protected Health Information) of any kind

- Production database credentials

```

## Key Differences from AGENTS.md

**AGENTS.md** (Framework knowledge):

```

next.js-16|use-cache|syntax:'use cache'|async-functions

next.js-16|connection|import-next/server|await-before-db

react-19|server-components|async-await|no-hooks

```

**CLAUDE.md** (Project behavior and context):

```

rule|data-fetching|server-components-only|no-client-fetch

Current Sprint: User authentication ✅ Email | 🔄 OAuth | ⏳ 2FA

Plan mode for ANY task touching patient data

```

**The distinction:**

- **AGENTS.md** = What syntax exists in the framework (universal, version-specific)

- **CLAUDE.md** = How to behave in THIS project (project-specific, context-aware)

## Why Workflow Rules Belong in CLAUDE.md

The workflow orchestration rules (plan mode, verification, self-improvement) complement the technical knowledge from AGENTS.md:

**Without workflow rules:**

```

User: "Add patient SSN field"

AI: [sees rule|encryption|all-pii-fields in CLAUDE.md]

AI: [generates encrypted field correctly] ✓

AI: [but marks complete without testing] ✗

```

**With workflow rules:**

```

User: "Add patient SSN field"

AI: [sees rule|encryption|all-pii-fields]

AI: [sees "Verification Before Done" workflow rule]

AI: [generates encrypted field]

AI: [verifies encryption in database]

AI: [checks audit logs are present]

AI: [demonstrates with test data]

AI: [marks complete only after proving it works] ✓The workflow rules ensure:

The AI doesn’t rush into outdated patterns (plan mode)

The AI verifies it used the right information (verification)

The AI learns from mistakes (self-improvement loop)

The AI works autonomously when appropriate (bug fixing)

This creates a complete system: knowledge (AGENTS.md) + behavior (CLAUDE.md) = reliable agent.

Budget reminder: Keep CLAUDE.md to ~150 lines total. If you find yourself exceeding this, move detailed explanations to .agent-memory/ and reference them as needed.

At a high level I have seen something like this work quite well (taking note of legacy systems and documentary folders and security or access limitations. Not perfect, but a great second go):

Best Practices

The Five Core Principles

1. Passive over active

Information needed >50% of the time goes in Tier 1 (passive). Rare information goes in Tier 2 (active retrieval).

2. Strict foundation budget

Keep CLAUDE.md under 150 lines. Every token is a “tax” on every conversation.

3. Version explicitly

Always prefix: framework@version|feature|facts

4. Decay intentionally

Add expiration dates to temporary context:

## Current Sprint (Expires 2025-02-28)

...

5. Promote patterns

Used a pattern 3+ times? Compress it into Tier 1.

Common Pitfalls

❌ The “Forcing” Trap

Don’t use aggressive instructions like “YOU MUST ALWAYS CHECK THE SKILL.” They compete with other directives and fail.

✅ Fix: Move knowledge to passive context.

❌ Bloating Tier 1

Don’t create 500-line CLAUDE.md files with explanatory prose.

✅ Fix: Keep to ~150 lines maximum. Move details elsewhere.

❌ Missing versions

Don’t write: cache|directive|'use cache'

✅ Fix: Write: next.js-16|cache|directive:'use cache'|replaces-unstable_cache

The Future

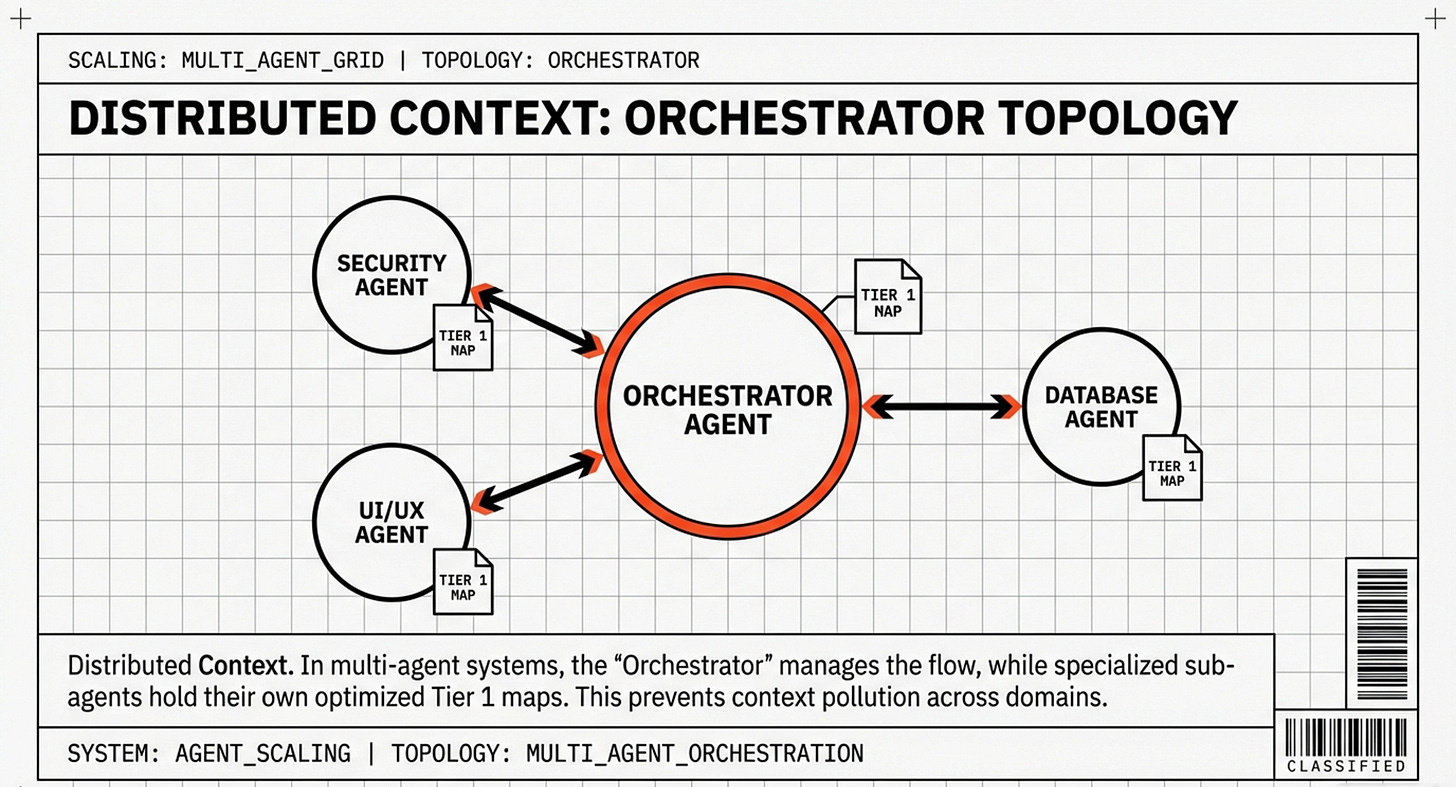

Multi-Agent Systems

The future lies in specialized agents collaborating:

Orchestrator Agent

├─ Code Agent (Next.js, React, TypeScript expertise)

├─ Database Agent (PostgreSQL, Prisma, optimization)

├─ Security Agent (HIPAA, encryption, auditing)

└─ Design Agent (Tailwind, accessibility, UI/UX)

Each has its own optimized Tier 1 for its domain, preventing context bloat while maintaining deep expertise.

The AGENTS.md Movement

Following Vercel’s success, other frameworks are publishing official compressed documentation:

Remix:

remix@2.5|routes|file-based|nested|loader|action

remix@2.5|loader|server-only|return-json

SvelteKit:

sveltekit@2.0|load-function|+page.server.ts|return-props

sveltekit@2.0|stores|$page|$navigating|$updated

This marks a new era: documentation authored for two audiences—humans (narrative understanding) and agents (deterministic pattern matching).

Very cool insights:

The fundamental insight: The best information is information the agent doesn’t have to decide to retrieve.

By moving from brittle “Skill” architectures that rely on probabilistic recall to passive context with compressed indexing, we bridge the gap between AI potential and production reliability.

An 8KB markdown file, structured correctly, outperforms 40KB of raw documentation and years of pre-training.

This isn’t just about better prompts—it’s about better information architecture.

The agent that never forgets isn’t the one with the best memory—it’s the one whose architecture makes forgetting impossible.

Passive context + compressed indexing = 100% reliability?

Ok, ok - I may be pushing it here. But you get the gist of what I mean..I hope

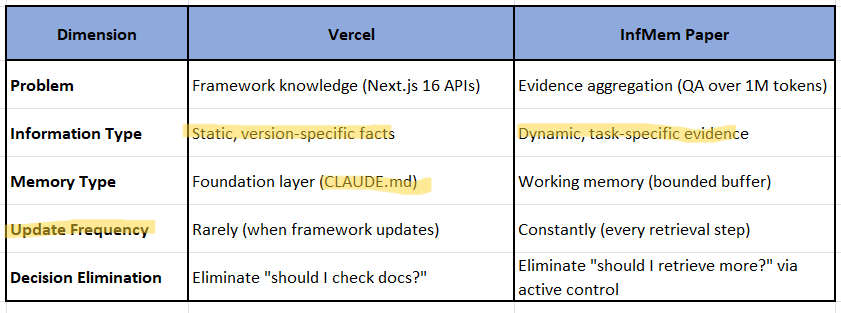

Static vs Dynamic Knowledge - Important Note!

Post Article Note:

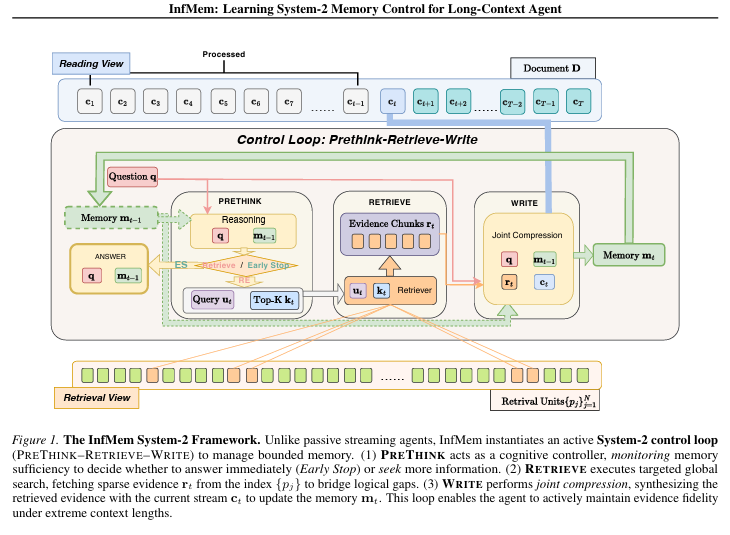

Before I really end, this landed in my inbox 2 days ago, and I deem it highly relevant and consistent with most of what you would have read above: InfMeM - Systems 2 Thinking. However it addresses a different layer.

Core Alignment

Our article’s focus: How to structure static knowledge (framework APIs, architectural rules) so AI agents don’t forget it

InfMem’s focus: How to manage dynamic evidence gathered during long-document reasoning tasks

The Shared Principle: Passive > Active

Both approaches converge on the same fundamental insights. This is critical in our understanding on the various memory innovations that are being worked on. Especially invested in:

Vercel:

“The best information is information the agent doesn’t have to decide to retrieve”

Solution: Compressed index in AGENTS.md (always present)

InfMem paper:

“Passive memory update strategies fail... agents decide when evidence is insufficient”

Solution: Active PreThink-Retrieve-Write loop with bounded memory

Where They Differ (Complementary, Not Contradictory)

Key Applicable Concepts from InfMem

1. System-2 Control (Directly Applicable)

InfMem explicitly frames memory management as System-2 cognitive control:

“System-2-style cognitive control... explicit, task-conditioned, state-dependent control over memory operations”

How this applies to our article:

Our article implicitly uses System-2 principles but doesn’t label them. We could strengthen the framework section by adding:

markdown

## The Cognitive Psychology: System-1 vs System-2

**System-1 (Fast, Automatic):**

- Skills-based retrieval: "Should I check the documentation?"

- Probabilistic, error-prone (50% failure rate)

**System-2 (Slow, Deliberate):**

- Passive context: Information always visible, pattern-matched automatically

- Deterministic (100% success rate)

InfMem research (2026) formalizes this as System-2 control for long-context

reasoning, where the agent actively monitors memory sufficiency and decides

when to retrieve more evidence. Our passive context approach eliminates the

need for System-2 *retrieval decisions* by making framework knowledge System-1

accessible—always present, never requiring conscious retrieval.2. The “Lost in the Middle” Problem (Already in the above Article)

InfMem confirms our Stanford citation:

“Facts buried between positions 20-80% show 45% retrieval accuracy vs 95% at beginning/end”

This validates our compression strategy: put critical knowledge at the beginning (AGENTS.md), not buried in middle of 40KB docs.

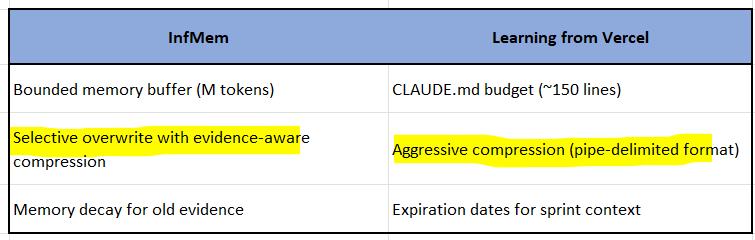

3. Bounded Memory Budget (Analogous Constraint)

InfMem maintains bounded working memory (fixed token budget M) and selectively overwrites.

Our article’s analogous constraint:

CLAUDE.md: ~150 lines maximum

If you exceed it, “important details slip through”

The parallel:

4. Two-Tier Memory System (Strong Validation)

InfMem uses:

Tier 1: Bounded working memory (always in context)

Tier 2: Full document (globally indexed, retrieved on-demand)

This exactly mirrors our architecture:

Tier 1: CLAUDE.md/AGENTS.md (passive, always loaded)

Tier 2: .agent-memory/ (active, searched via MCP when needed)

Quote from InfMem that validates our approach:

“Fine-grained Indexing for Global Access... pre-construct a finer-grained set of retrieval units {pj} from the same document. Unlike the coarse streaming chunks, these units are compact and globally indexed.”

This is precisely what AGENTS.md does: compact, globally accessible index vs full documentation.

What NOT to Take from InfMem

❌ Don’t Add Complexity Around Retrieval Loops

InfMem’s PreThink-Retrieve-Write loop is for dynamic evidence gathering in QA tasks, not for managing static framework knowledge.

For framework knowledge (our use case):

Wrong: Add a retrieval loop to decide when to check AGENTS.md

Right: Make AGENTS.md always present (no loop needed)

❌ Don’t Confuse Memory Types

InfMem’s “memory” = temporary evidence buffer during a single task

Our “memory” = persistent project knowledge across all tasks

These are different:

Suggested Addition: System-2 Framing

In the “Why This Works: The Psychology” section, I would add this note:

### System-2 Control and Cognitive Offloading

Recent research on AI agent memory (InfMem, 2026) formalizes memory

management using System-2 cognitive control—explicit, deliberate monitoring

of what the agent knows and needs.

**Traditional Skills approach:**

- Requires System-2 decision: "Do I need to check documentation?"

- Fails 50% of the time due to decision fatigue

**Passive context approach:**

- Offloads to System-1: Information is simply present

- Pattern recognition (automatic) replaces decision-making (effortful)

- Eliminates the decision point that causes 50% failure rate

By converting framework knowledge from System-2 (active retrieval) to

System-1 (passive recognition), we achieve deterministic reliability.Potential Mention in Article Body

In The Future, a brief note, addition:

markdown

### System-2 Memory Control for Dynamic Tasks

While this article focuses on static framework knowledge (AGENTS.md for

Next.js APIs), parallel research addresses dynamic evidence management.

InfMem (Wang et al., 2026) demonstrates System-2 cognitive control for

long-context QA, where agents actively monitor memory sufficiency and

retrieve missing evidence across million-token documents. Their bounded

memory architecture mirrors our two-tier system:

- **Tier 1:** Compressed working memory (analogous to CLAUDE.md)

- **Tier 2:** Globally indexed retrieval units (analogous to .agent-memory/)

The key distinction: InfMem manages *ephemeral task evidence* (clears after

each question), while our system manages *persistent project knowledge*

(lasts the project lifetime). Both validate the principle: bounded, structured

memory outperforms unbounded, unstructured context.Bottom Line

Yes, InfMem is relevant and validates our core principles:

✅ Bounded memory budgets prevent cognitive overload

✅ Two-tier architecture (working memory + deep storage)

✅ Passive > Active for critical information !!!!!!!!!!!!

✅ Compression is essential for efficiency

✅ System-2 framing strengthens theoretical foundation

But don’t over-apply it:

❌ InfMem’s retrieval loops are for dynamic evidence, not static framework knowledge

❌ Their memory management is task-specific, ours is project-persistent

❌ Don’t add complexity by importing their PreThink-Retrieve-Write to framework docs

References

Primary Research

Vercel - “AGENTS.md Outperforms Skills in Our Agent Evals” (2026)

https://vercel.com/blog/agents-md-outperforms-skills-in-our-agent-evalsRob Shocks - “This new change fixes Claude Skills” (2026)

Anthropic - “Agent Skills Overview”

https://platform.claude.com/docs/en/agents-and-tools/agent-skills/overviewAnthropic - “Building Multi-Agent Systems”

Cognitive Science

Liu, Nelson F., et al. - “Lost in the Middle: How Language Models Use Long Contexts”

arXiv:2307.03172 (2024)

https://arxiv.org/abs/2307.03172Miller, George A. - “The Magical Number Seven, Plus or Minus Two”

Psychological Review, 63(2), 81-97 (1956)Sweller, John - “Cognitive Load Theory”

Cognitive Science, 12(2), 257-285 (1988)

Technical Documentation

Next.js - “Caching in Next.js 16”

Anthropic - “Model Context Protocol”

Cursor - “Context and Memory Management”

well written!