Bridging Hardware-Aware Budgeting with Version-Controlled Contextual Reasoning

Agent(ic) Memory and Workflows

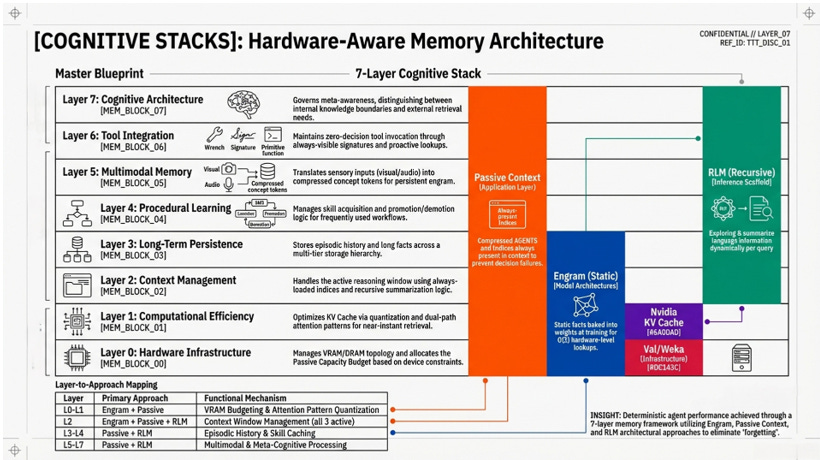

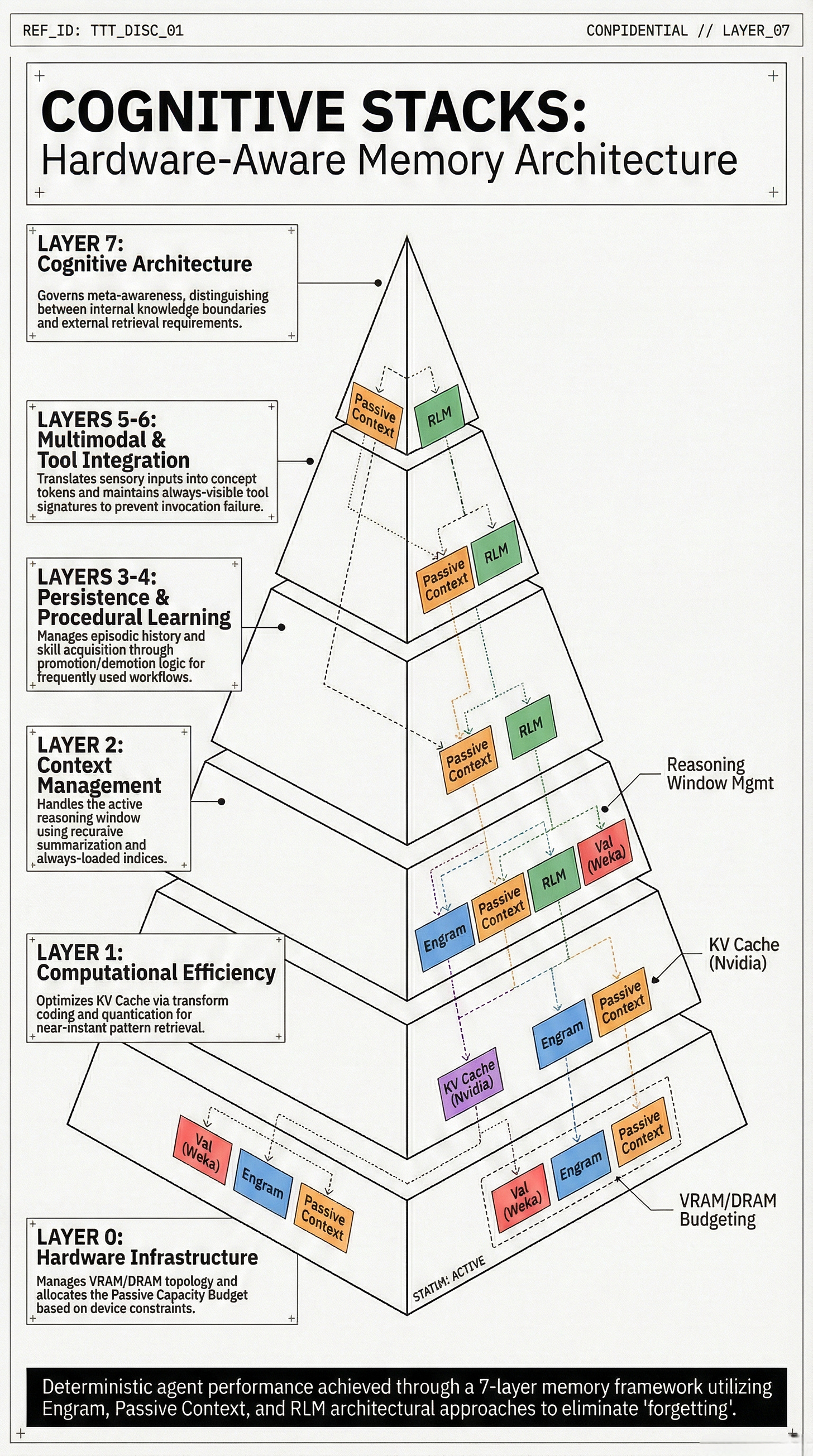

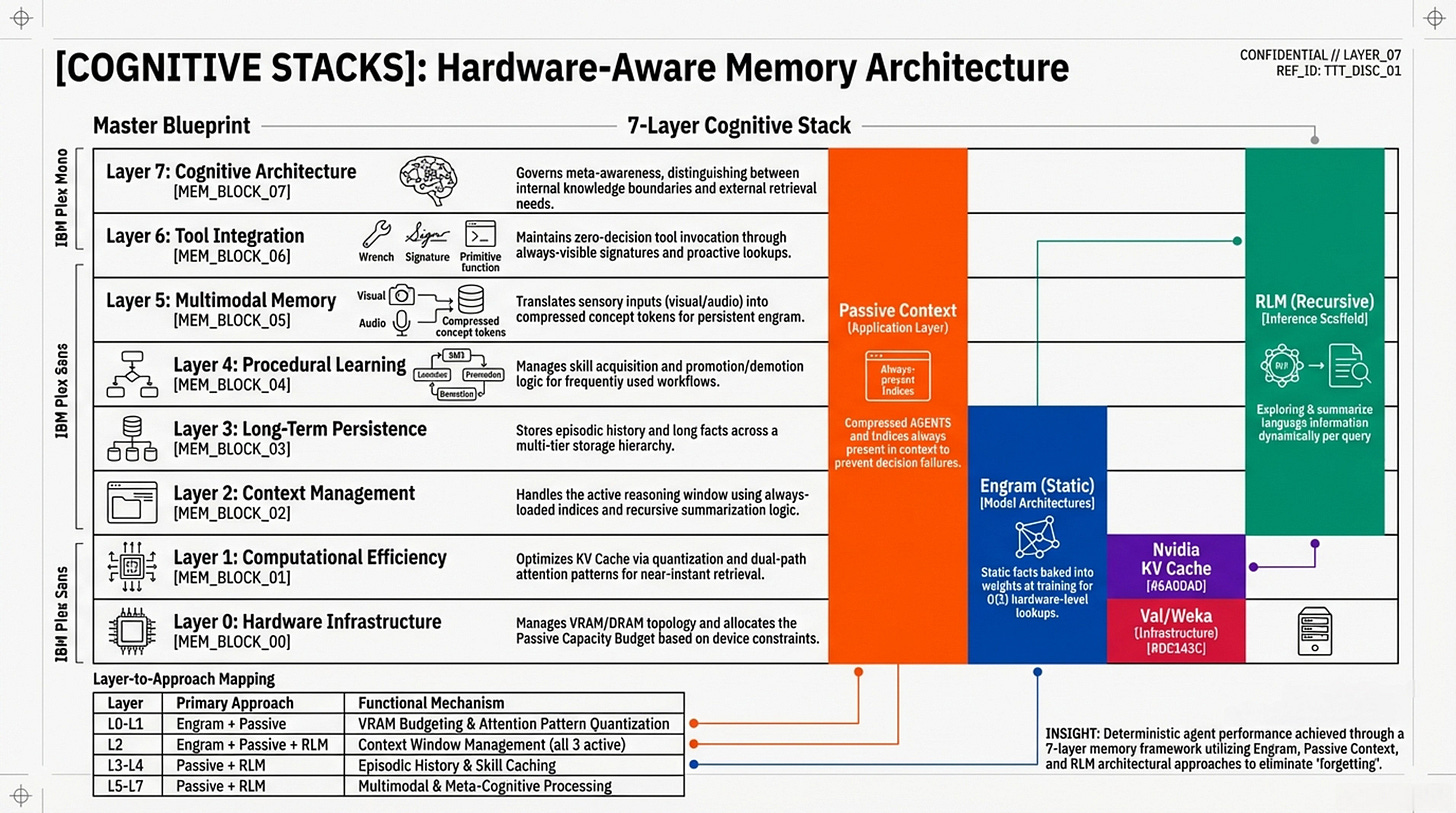

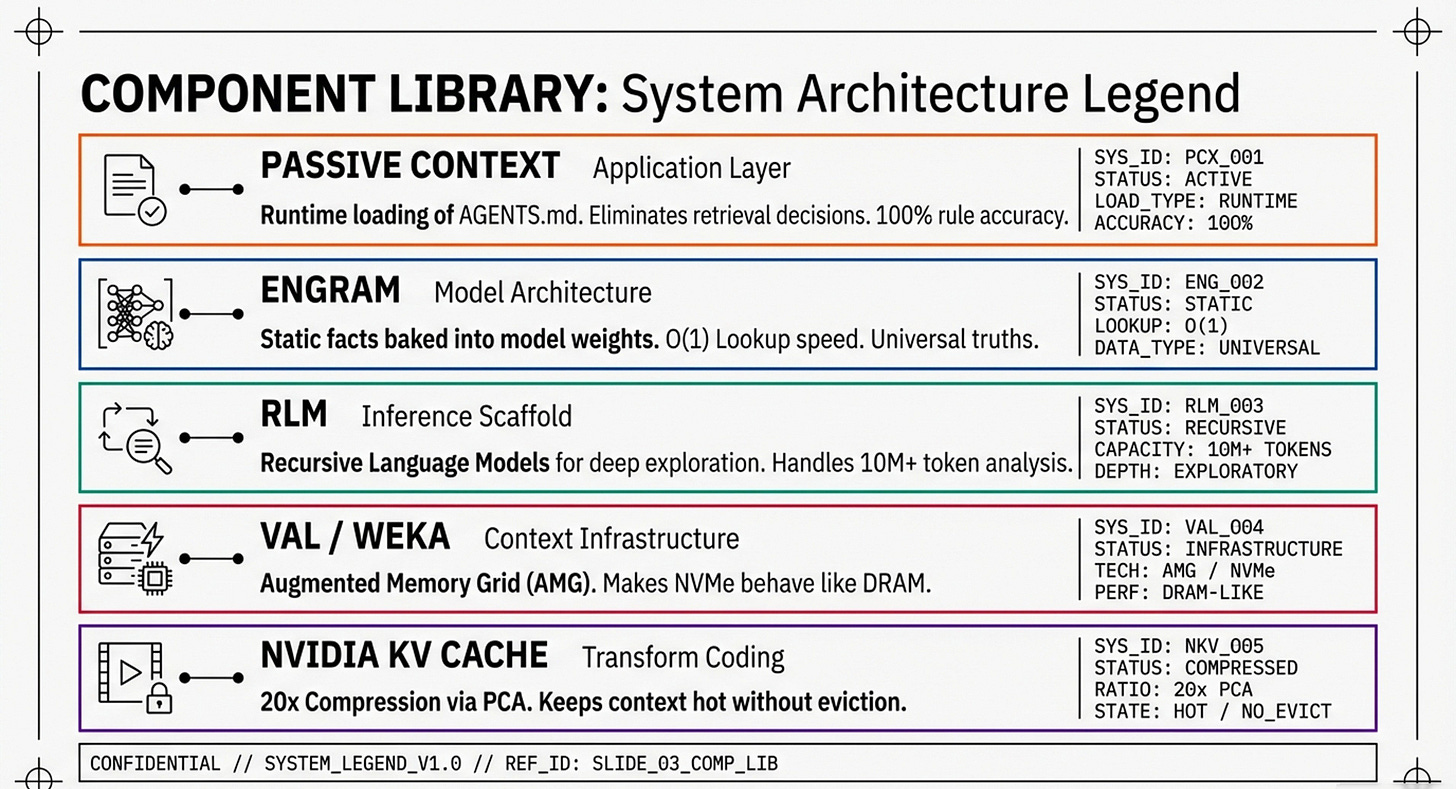

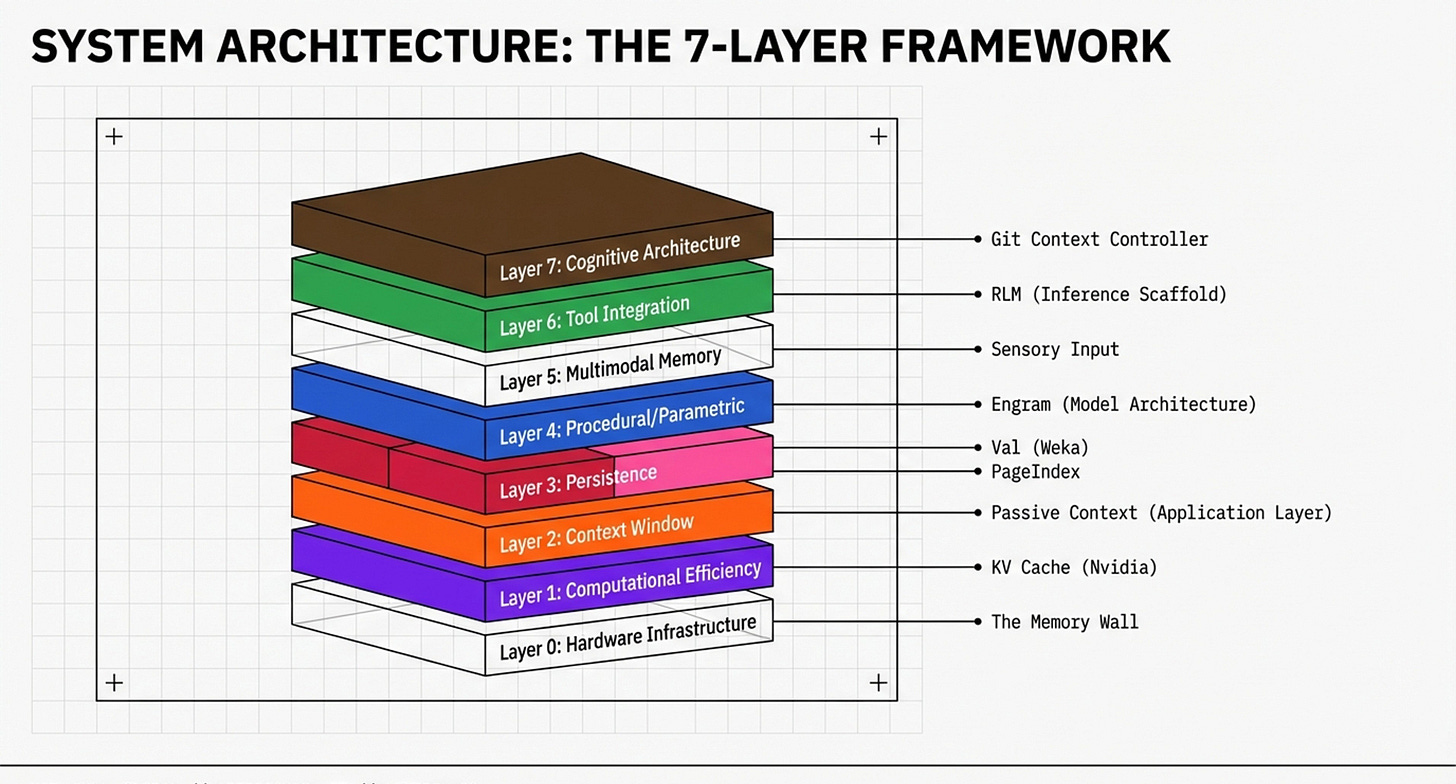

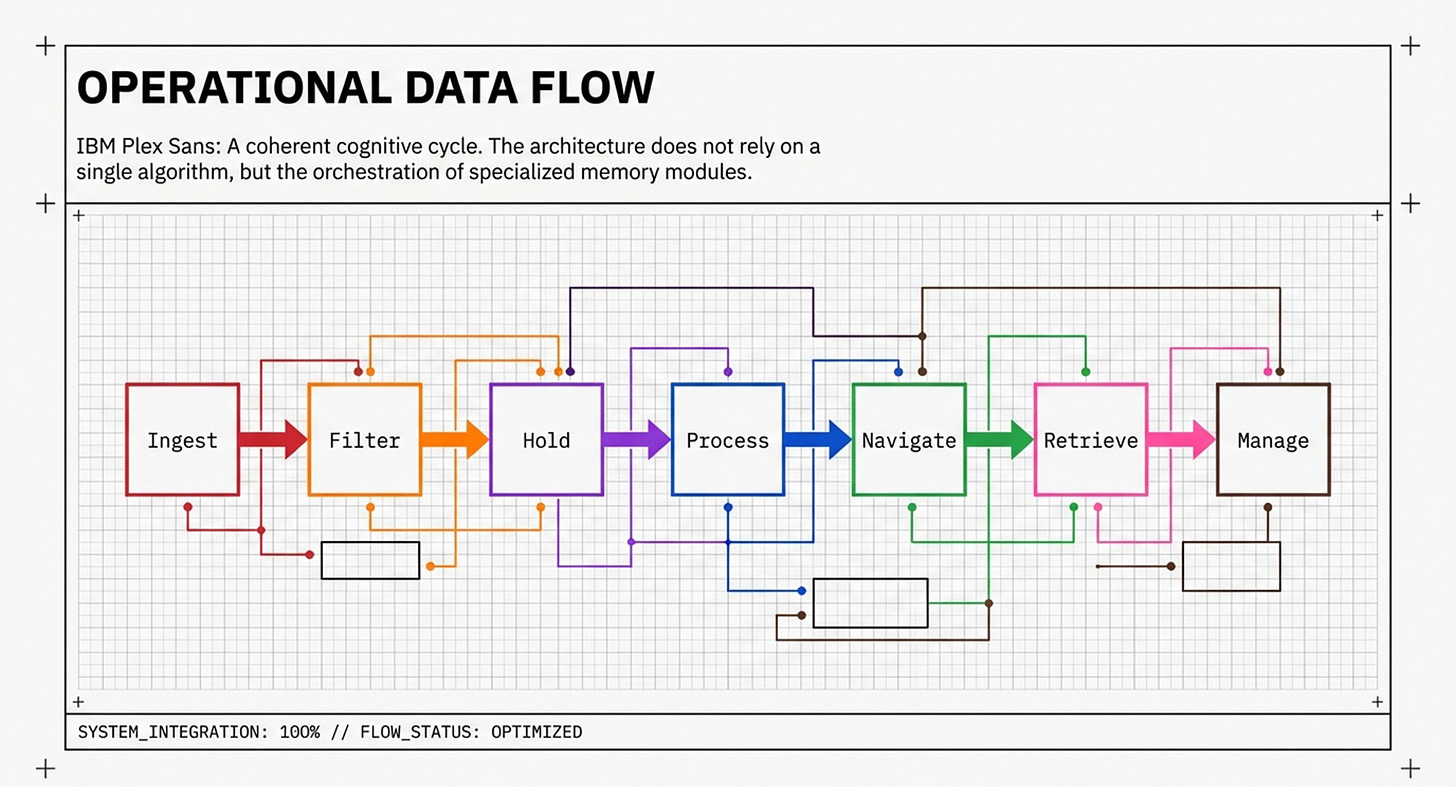

Continuing from The New Laws of Machine Memory, this post presents two condensed visualizations with slightly different perspectives. The industry has moved beyond "better prompts" toward a Unified Master Blueprint.

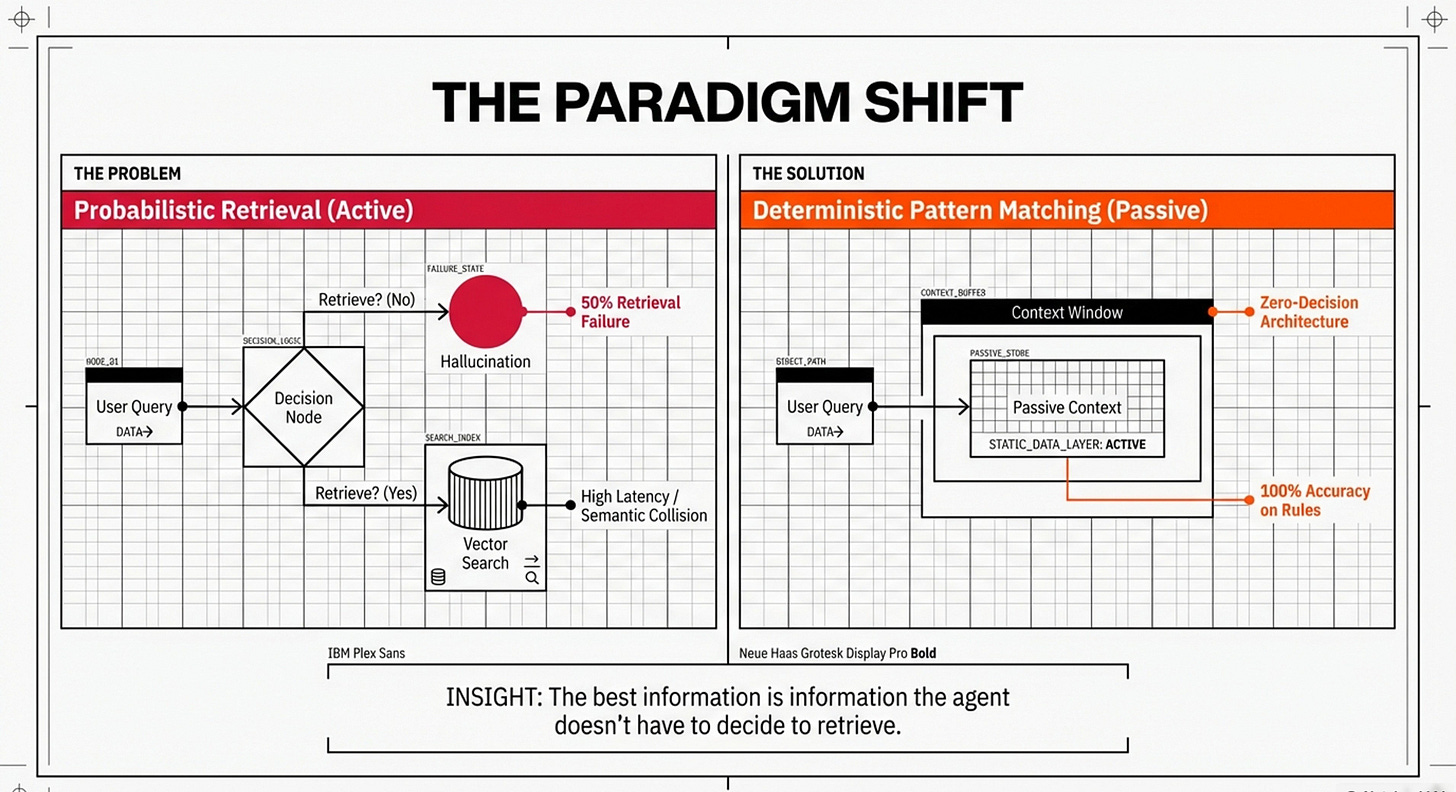

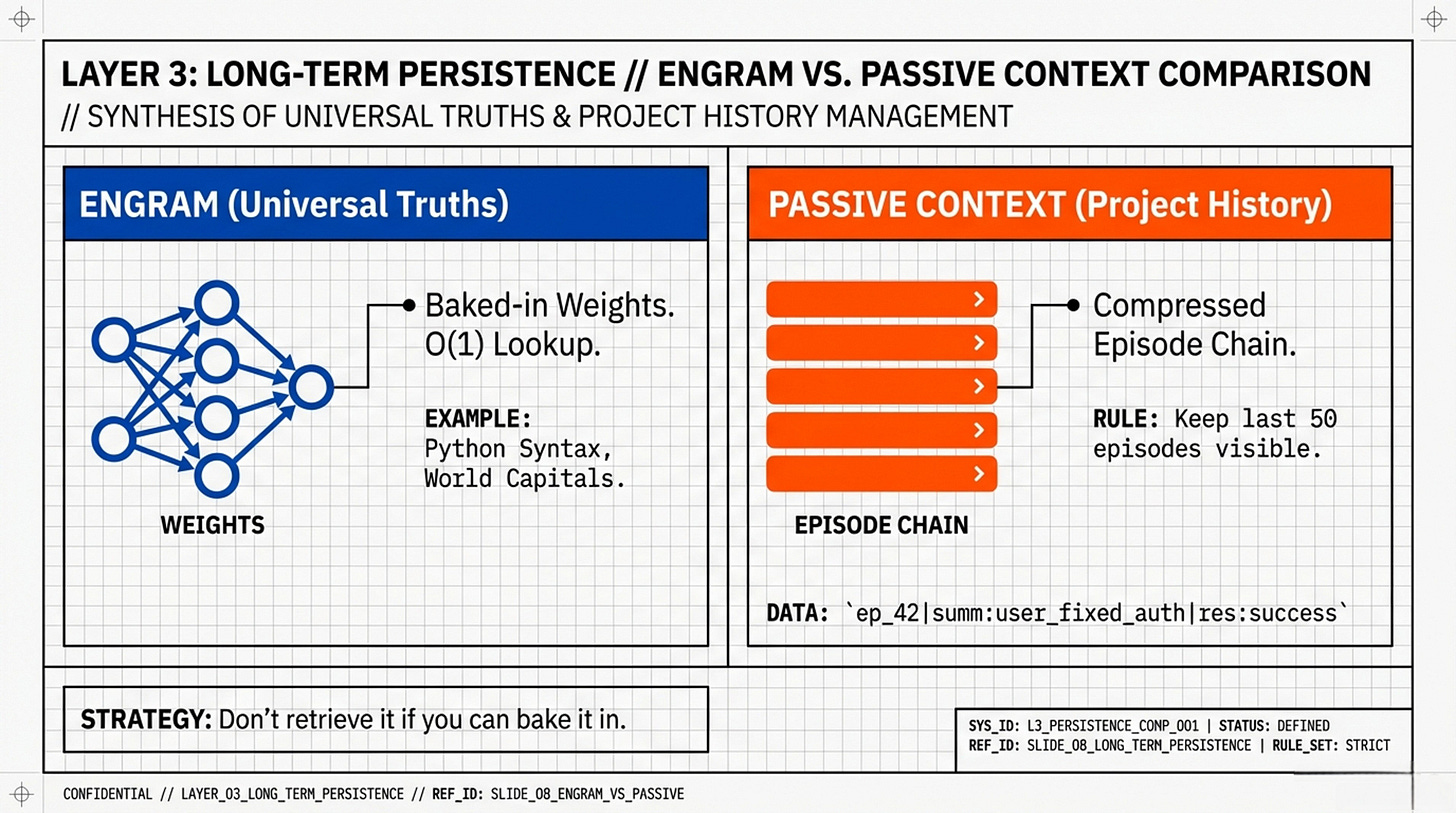

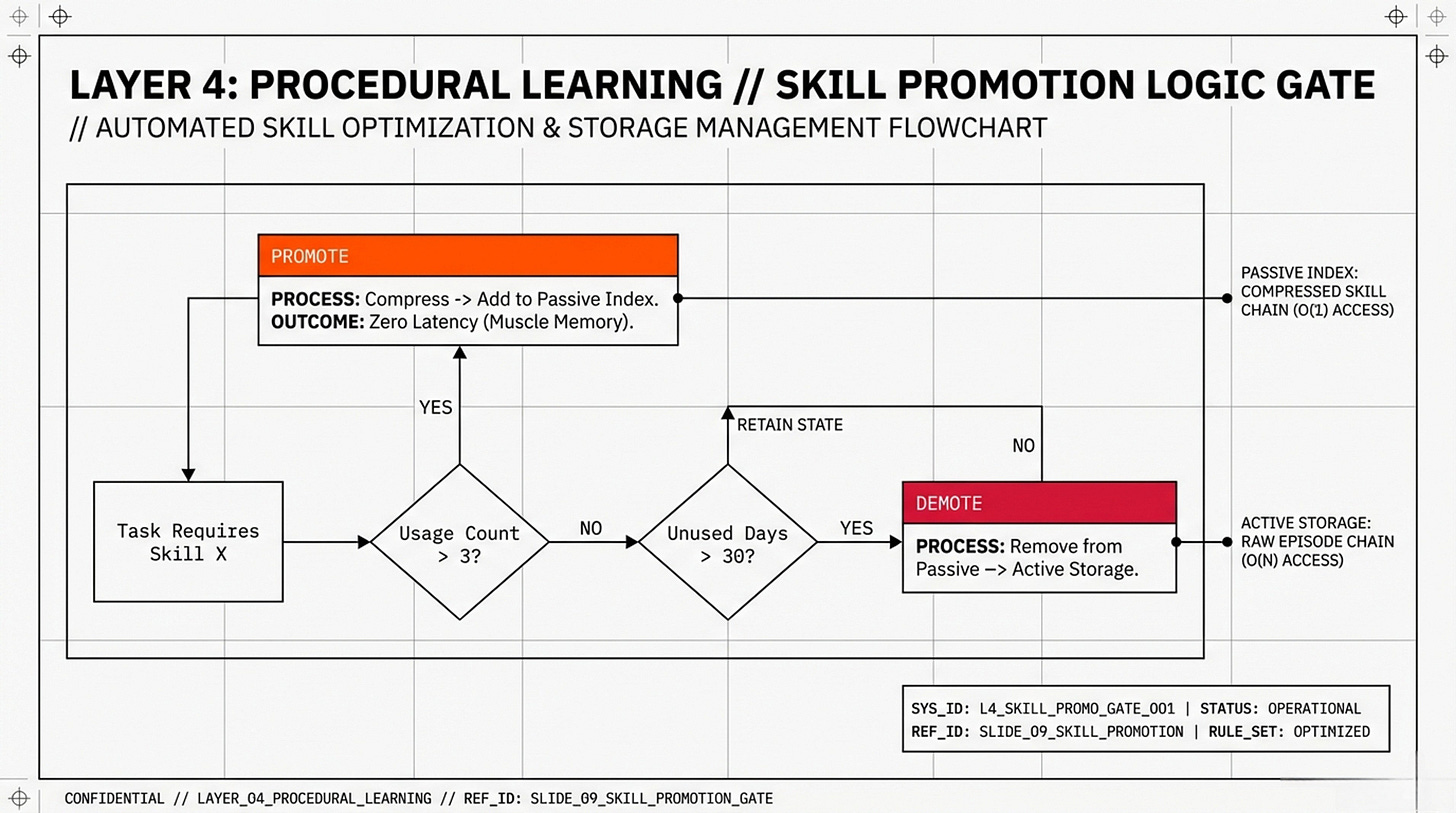

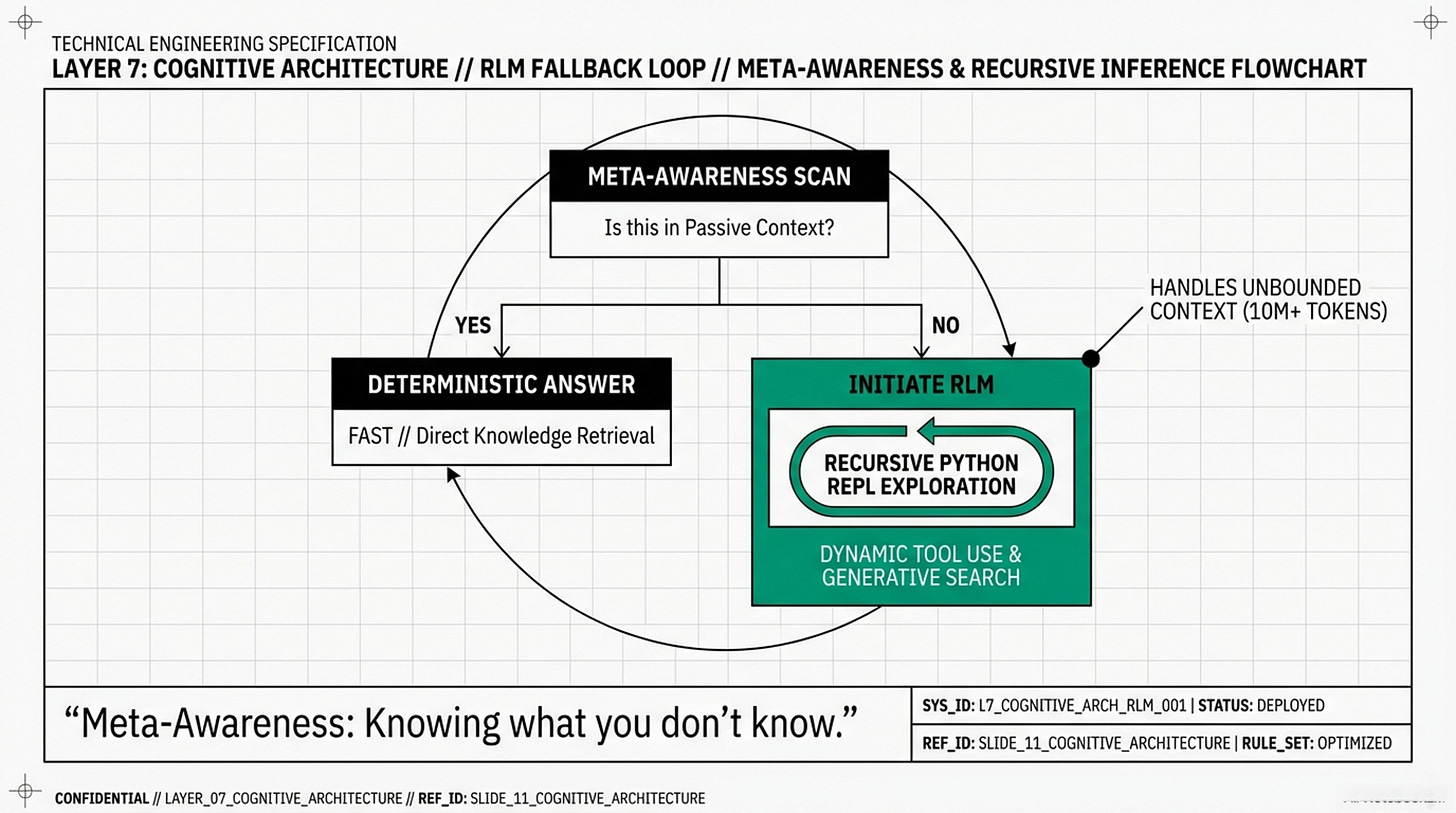

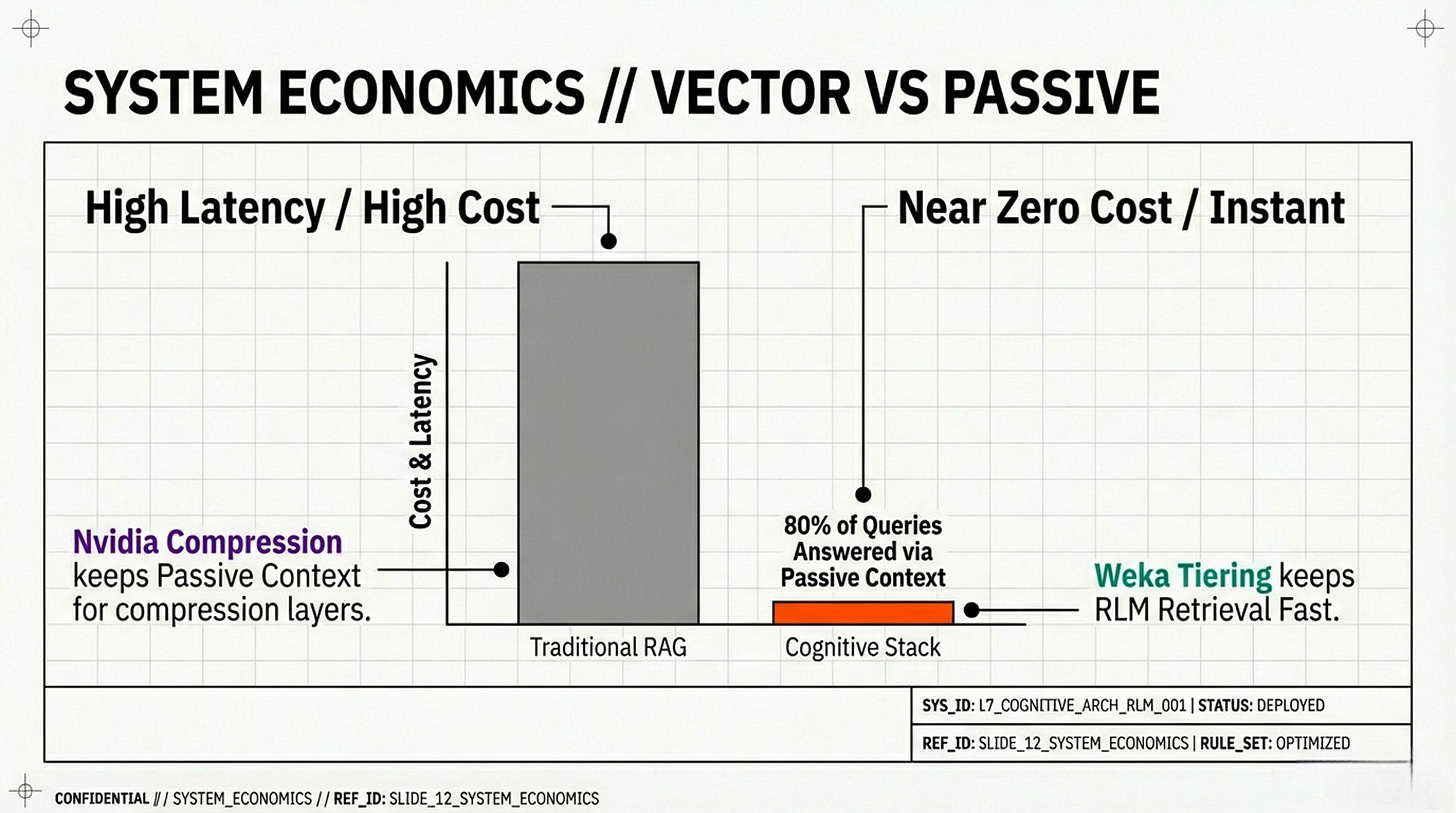

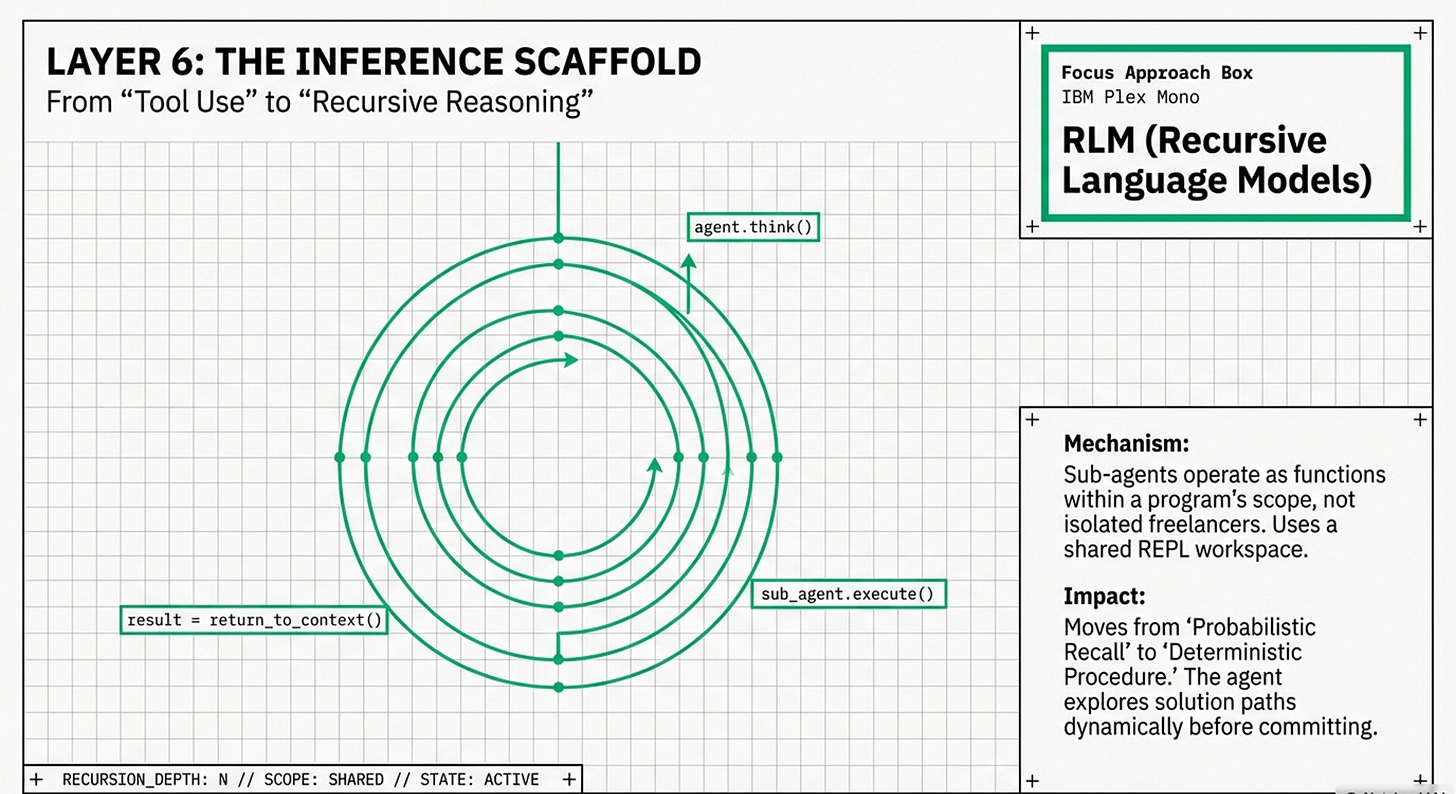

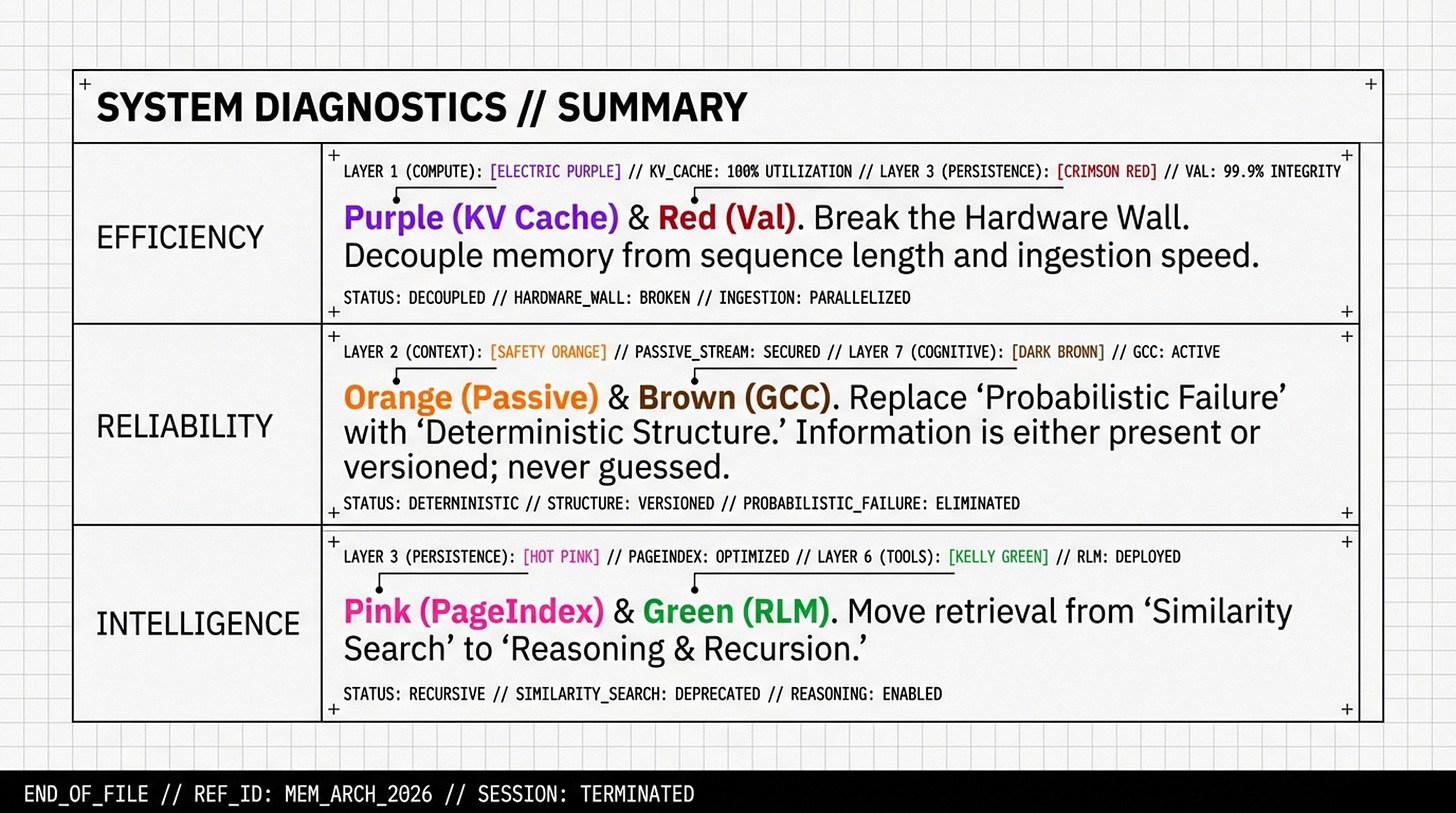

By unbundling memory into functional layers, we can better understand how to “engineer agents” whose architecture “makes forgetting (almost) impossible”. The reported results from Vercel (100% accuracy), PageIndex (98.7% accuracy), and GCC (SOTA benchmark scores) point towards deterministic architectural engineering as a functional reality. Other memory optimizations, such as Recursive Language Models (RLMs), reward elegant recursive code over their modular agentic cousins.

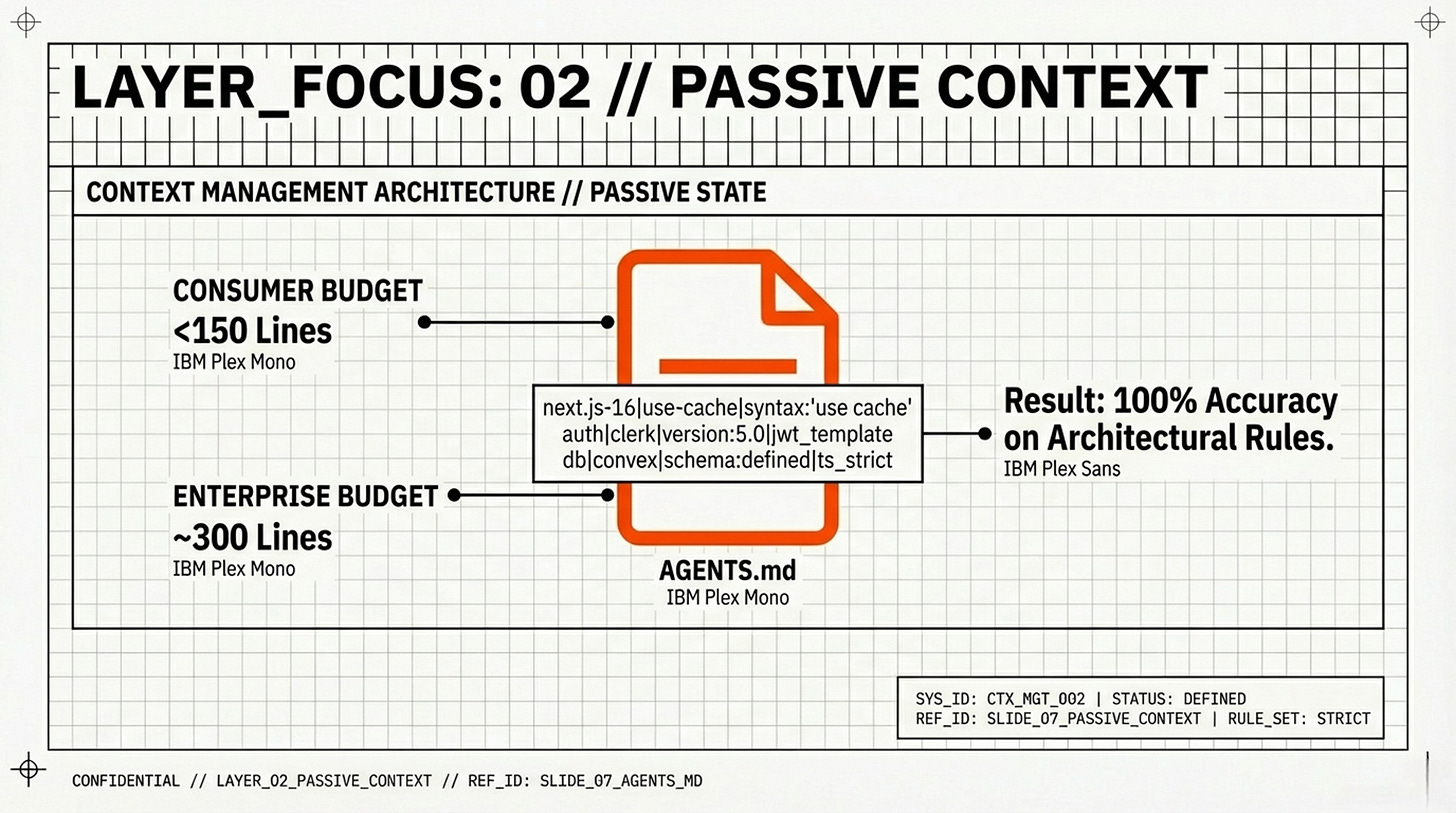

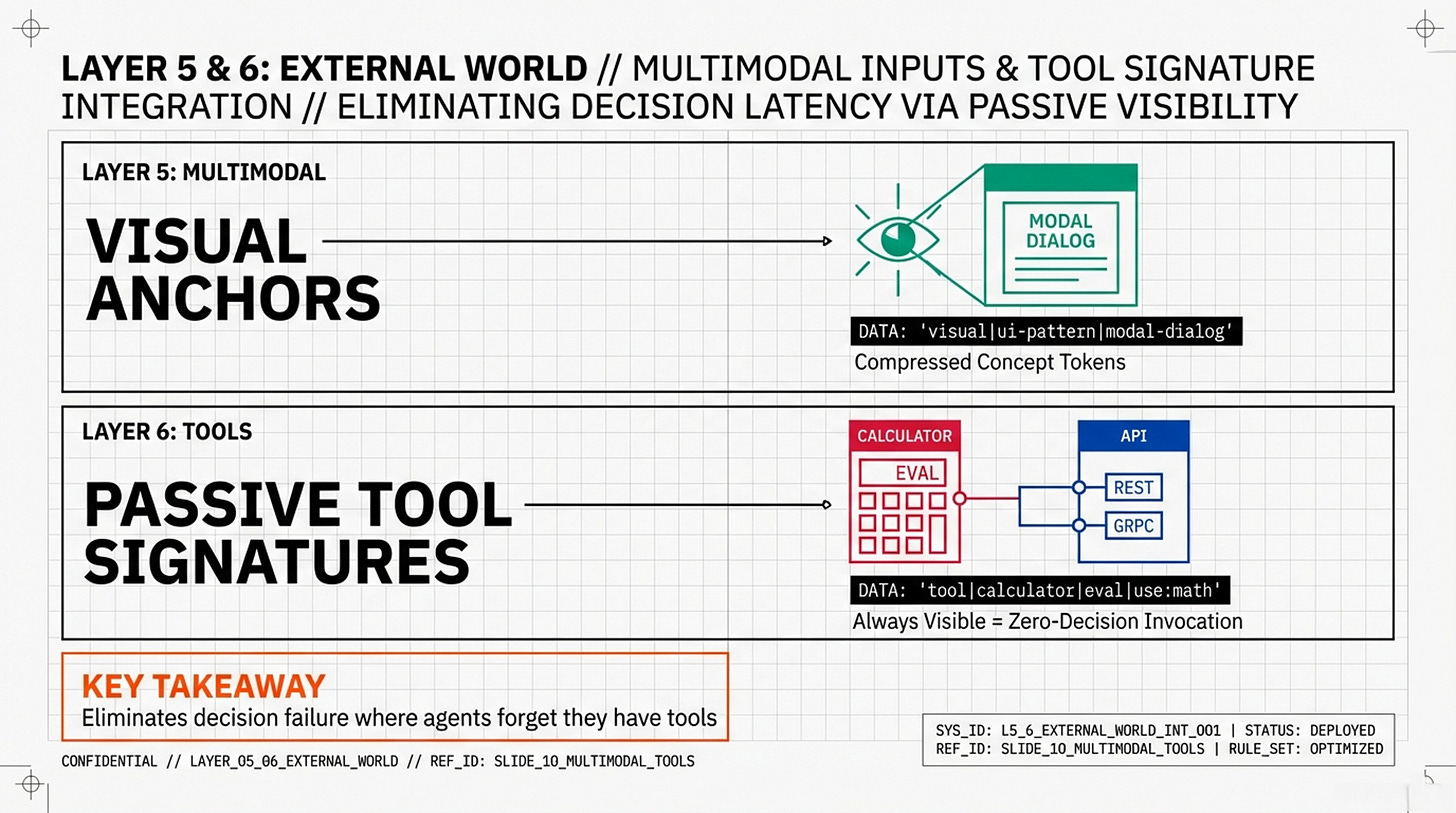

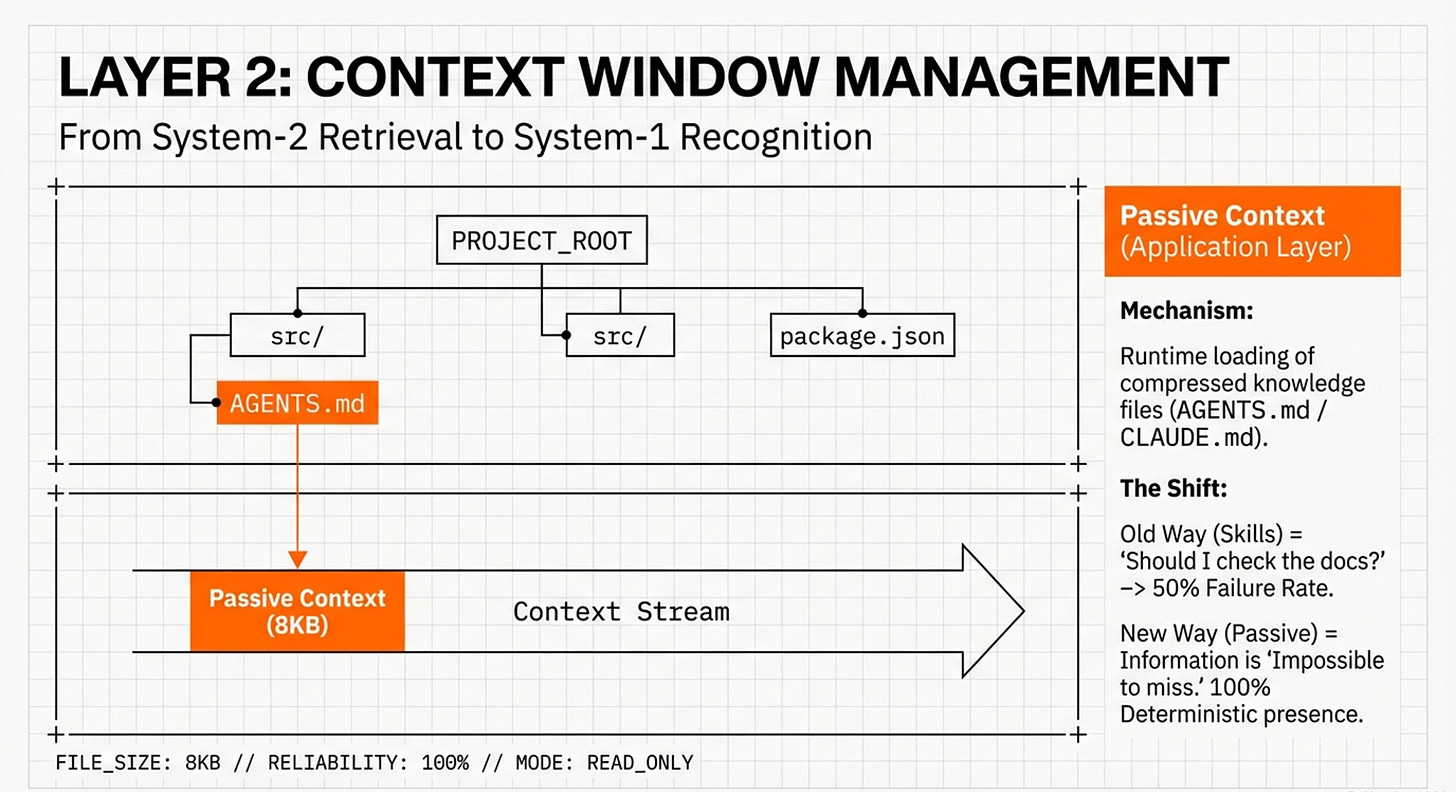

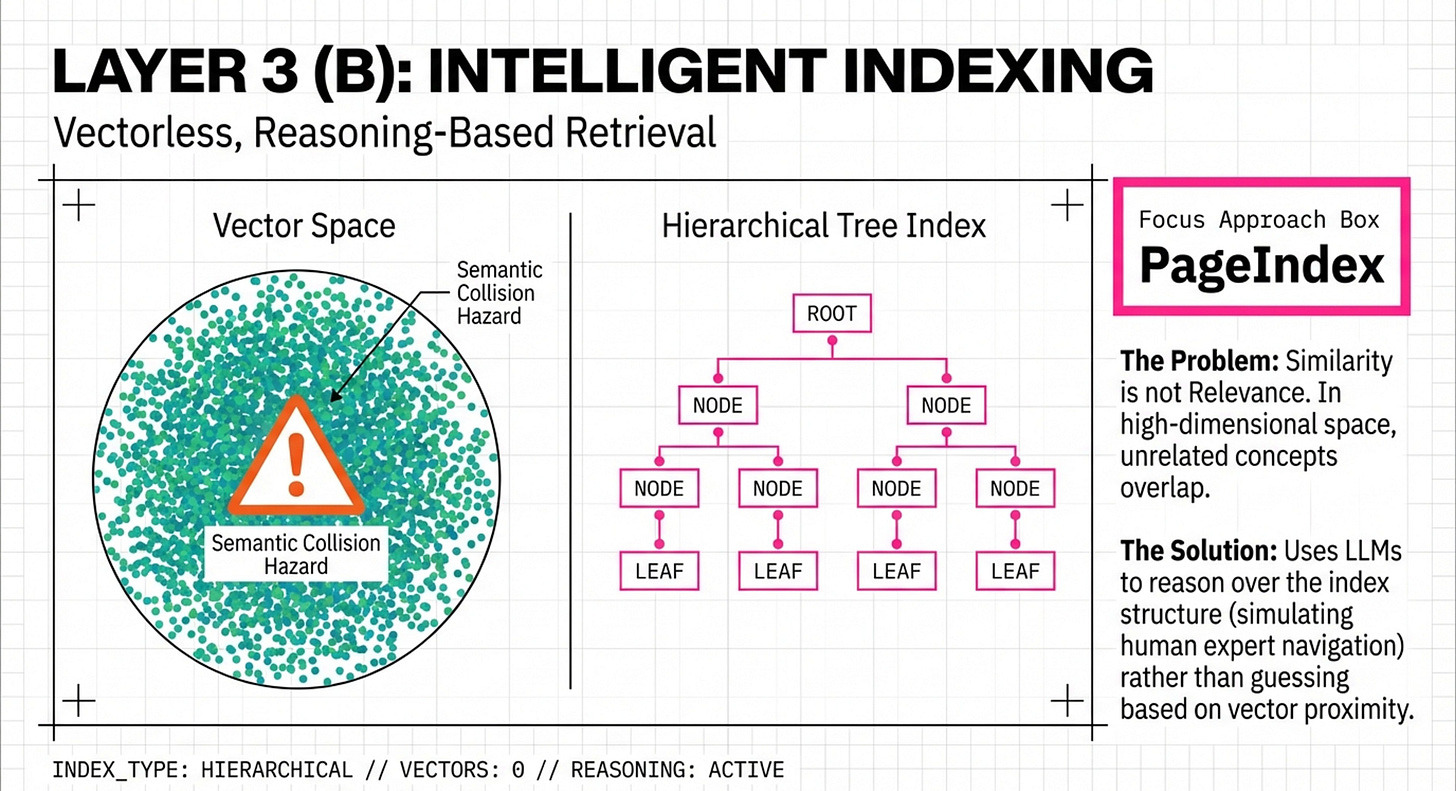

Systems like PageIndex and AGENTS.md use "Intelligent Indexing" (hierarchical trees and pipe-delimited maps) to simulate how a human expert navigates a document. What’s exciting is that tasks are shifting from mathematical "vibe" checks to engineering-grade, traceable reasoning paths. This is an evolving space, no doubt, but the leaps we’re witnessing are inspiring, to say the least:

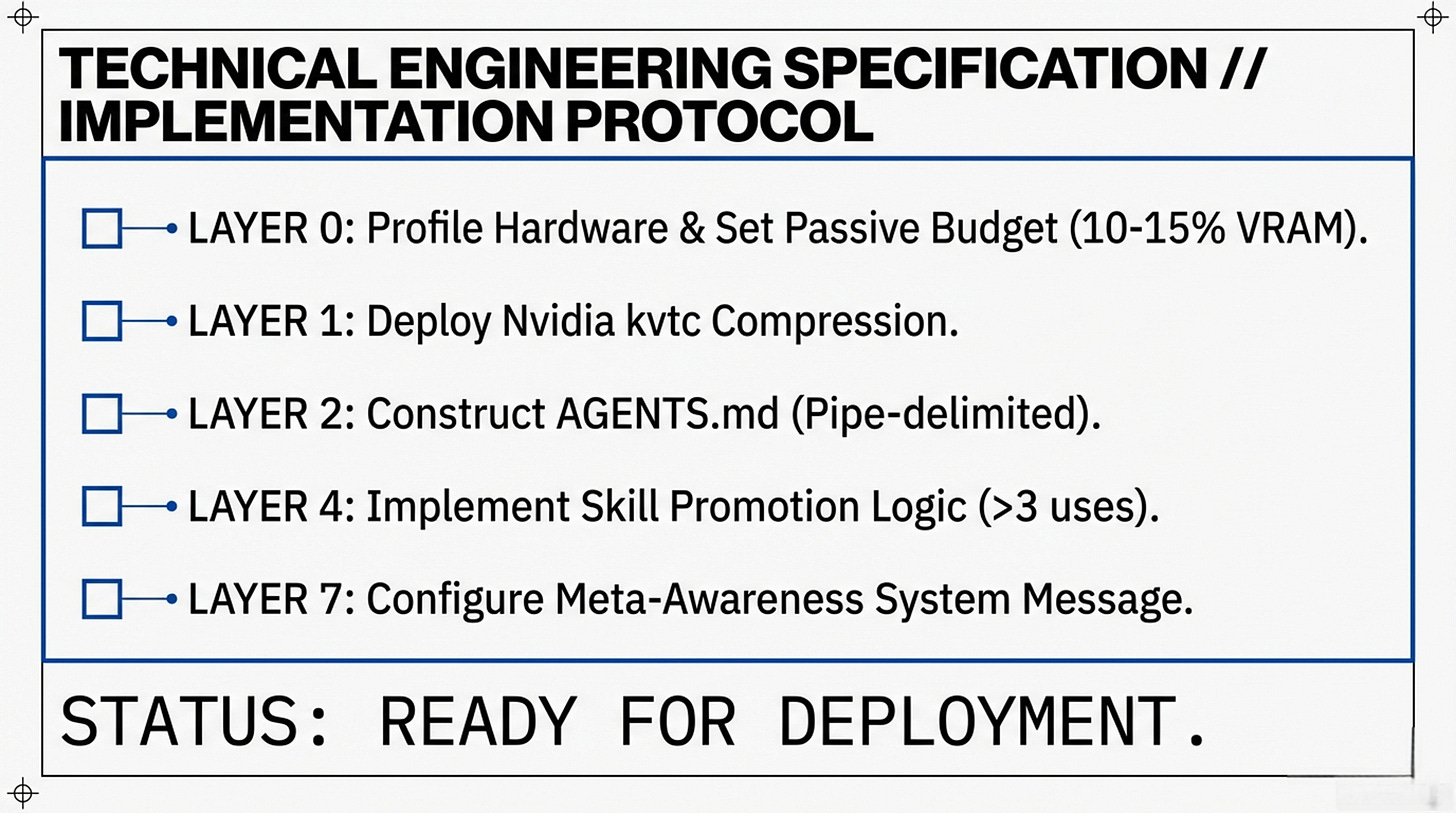

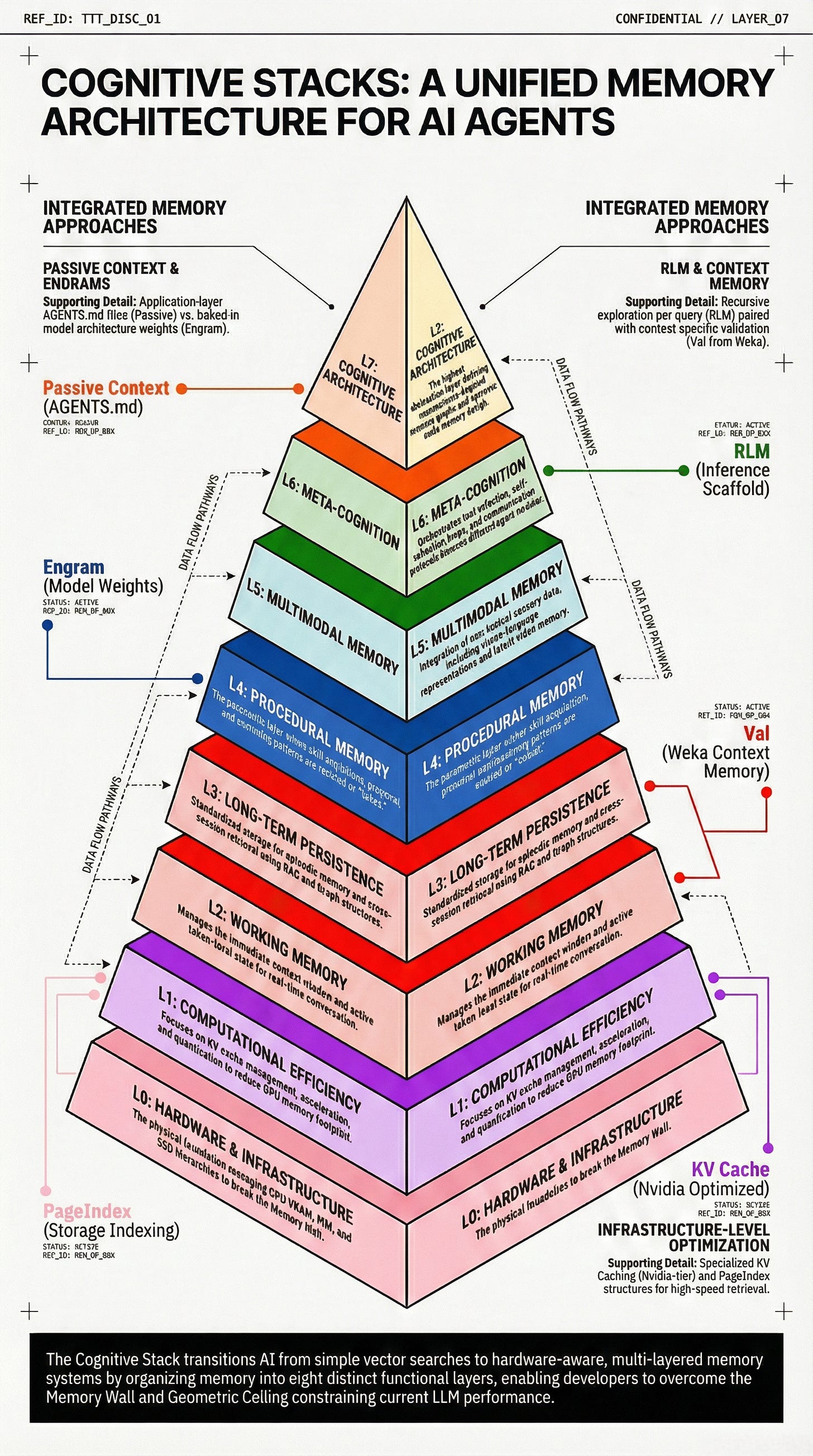

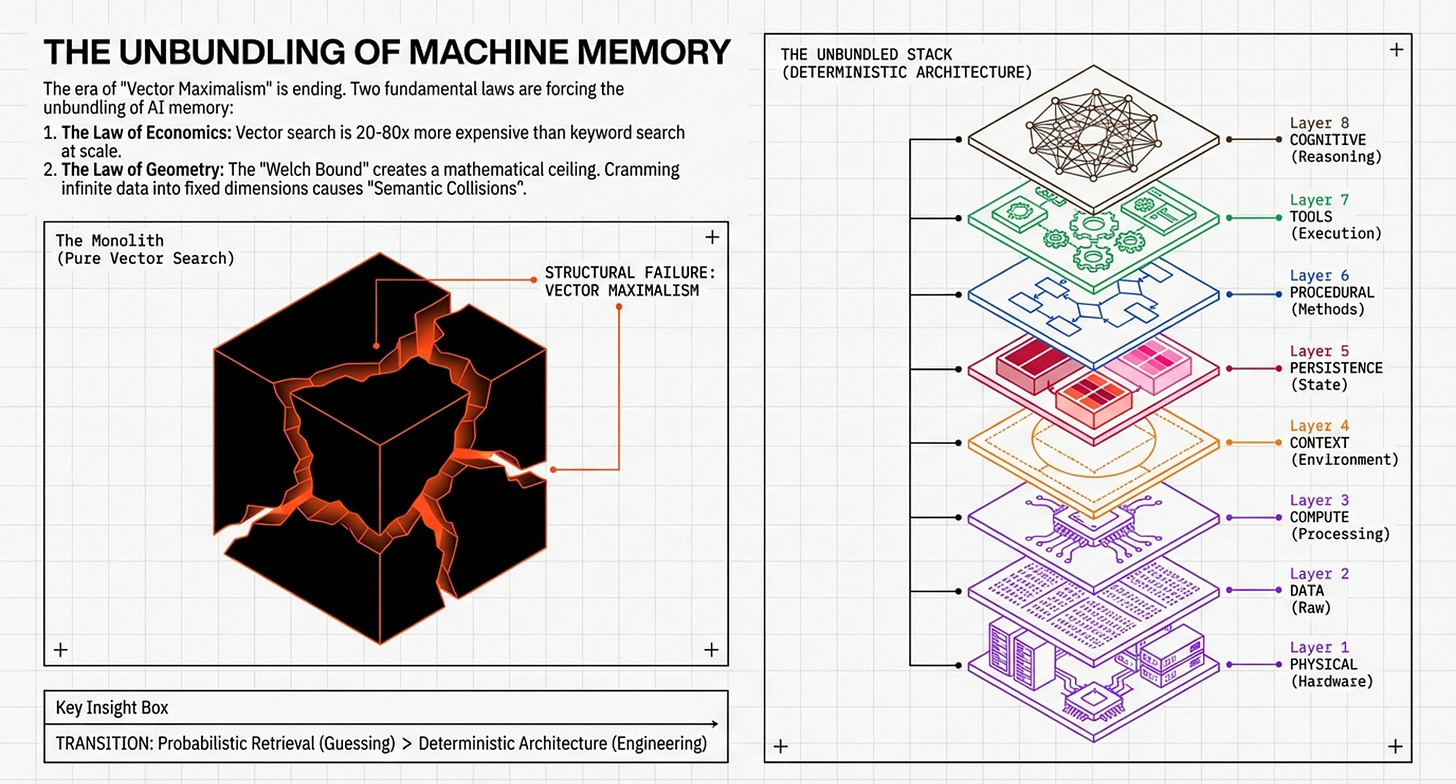
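To make the idea of hierarchical indexing concrete, here is a minimal sketch of tree navigation. It is not PageIndex's or AGENTS.md's actual implementation: the `Node` class, the `navigate` function, and the sample tree are all hypothetical, and simple keyword overlap stands in for whatever branch-selection step (typically a model call) a real system would use. What it shows is why the result is traceable: the answer comes with the explicit path that was followed.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Node:
    """One entry in a hierarchical index (hypothetical structure)."""
    title: str
    summary: str
    children: List["Node"] = field(default_factory=list)


def overlap(node: Node, query_terms: Set[str]) -> int:
    """Count query terms that appear in the node's title or summary."""
    words = set((node.title + " " + node.summary).lower().split())
    return len(words & query_terms)


def navigate(root: Node, query_terms: Set[str]) -> List[str]:
    """Walk down the tree, picking the best-matching child at each level.

    Returns the list of titles visited, which is the traceable path.
    Keyword overlap is an assumption standing in for a smarter selector.
    """
    path = [root.title]
    node = root
    while node.children:
        best = max(node.children, key=lambda c: overlap(c, query_terms))
        if overlap(best, query_terms) == 0:
            break  # no child is relevant, so stop at the current section
        path.append(best.title)
        node = best
    return path


# Illustrative tree; the titles and summaries are made up.
report = Node("Annual Report", "company overview", [
    Node("Financials", "revenue costs margins", [
        Node("Q1", "first quarter revenue"),
        Node("Q2", "second quarter revenue"),
    ]),
    Node("Risks", "legal regulatory exposure"),
])

print(navigate(report, {"revenue", "q2"}))
```

Because each step is a discrete choice between named sections, the path itself can be logged and audited, which is harder to do with a flat similarity search over chunks.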

The slight differences you will see in the visualizations below reflect two distinct but complementary ways of viewing the 0-7 Layer Memory Framework. While they share the same underlying logic, they are designed to answer slightly different questions: "Can we physically run this?" versus "How do we make it intelligent and reliable?"

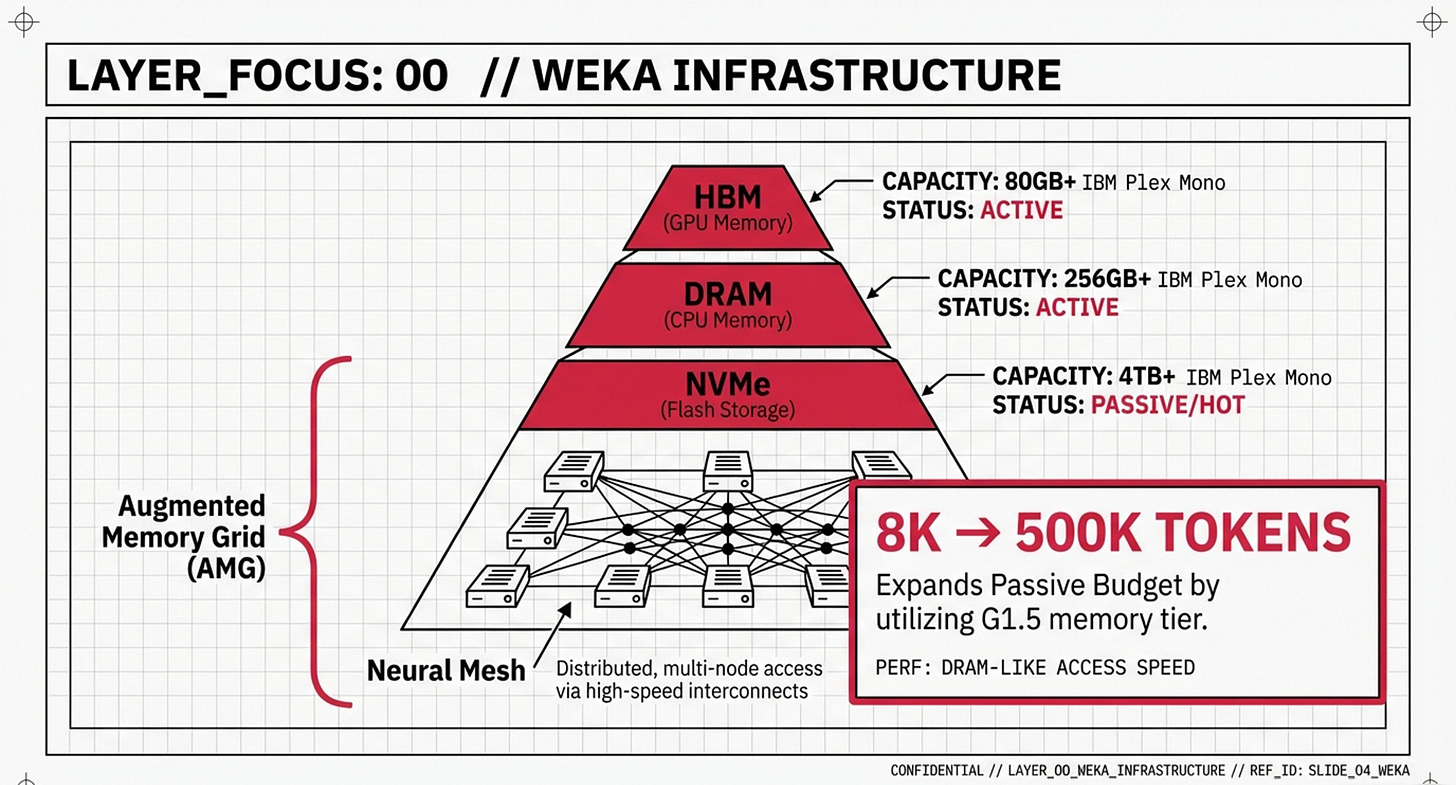

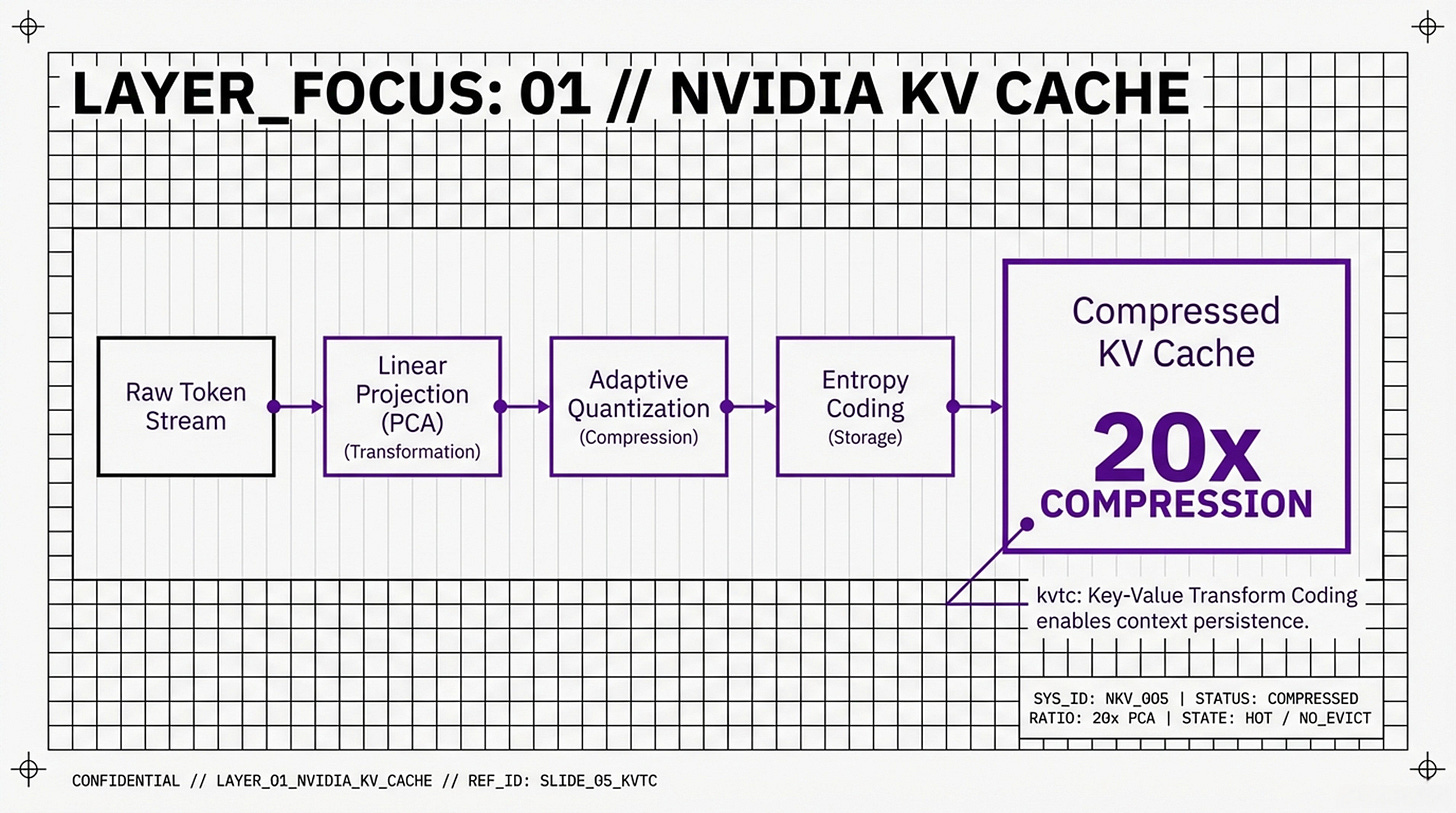

The Hardware-Software Synthesis (The Engineer’s Blueprint)

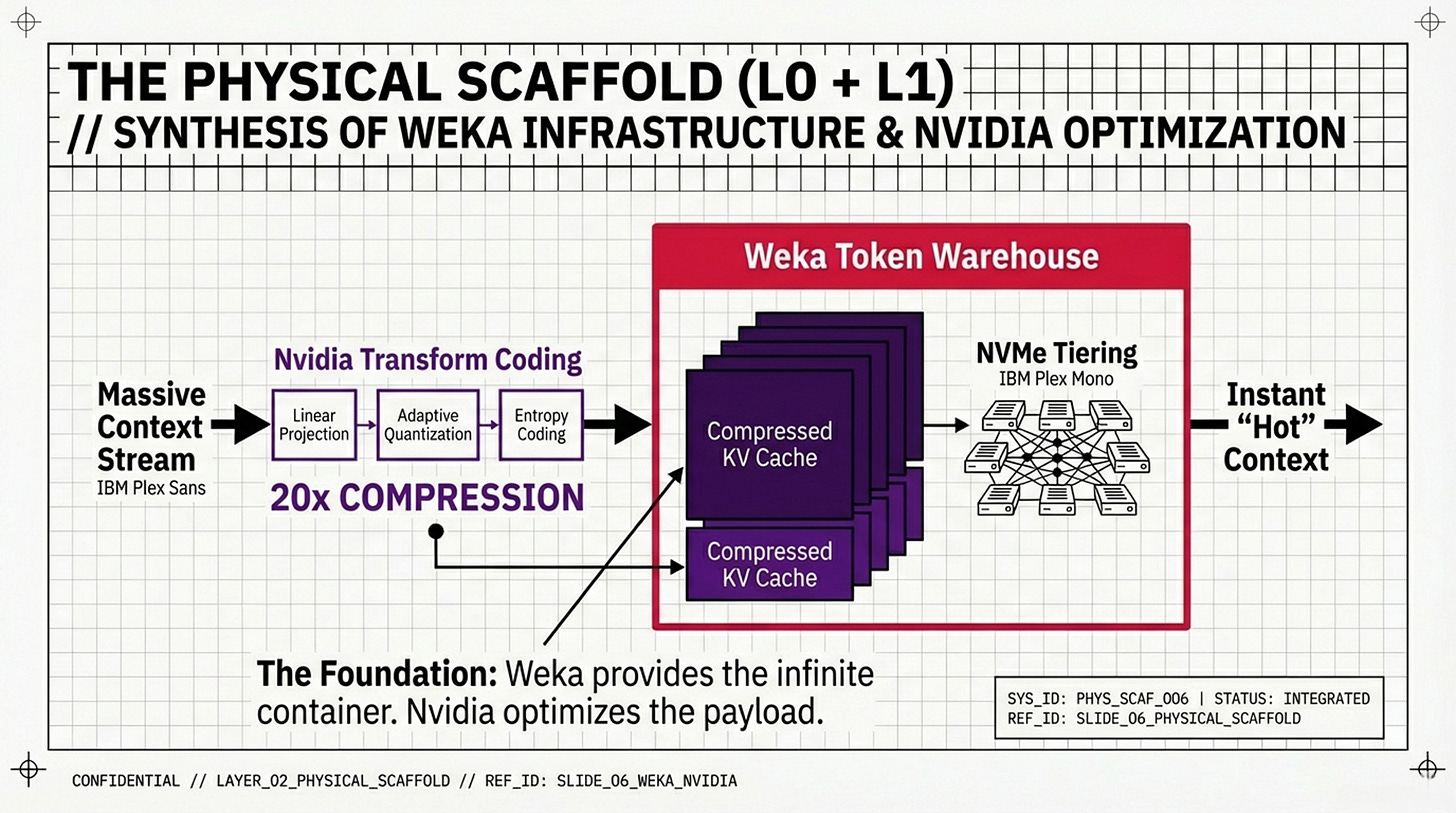

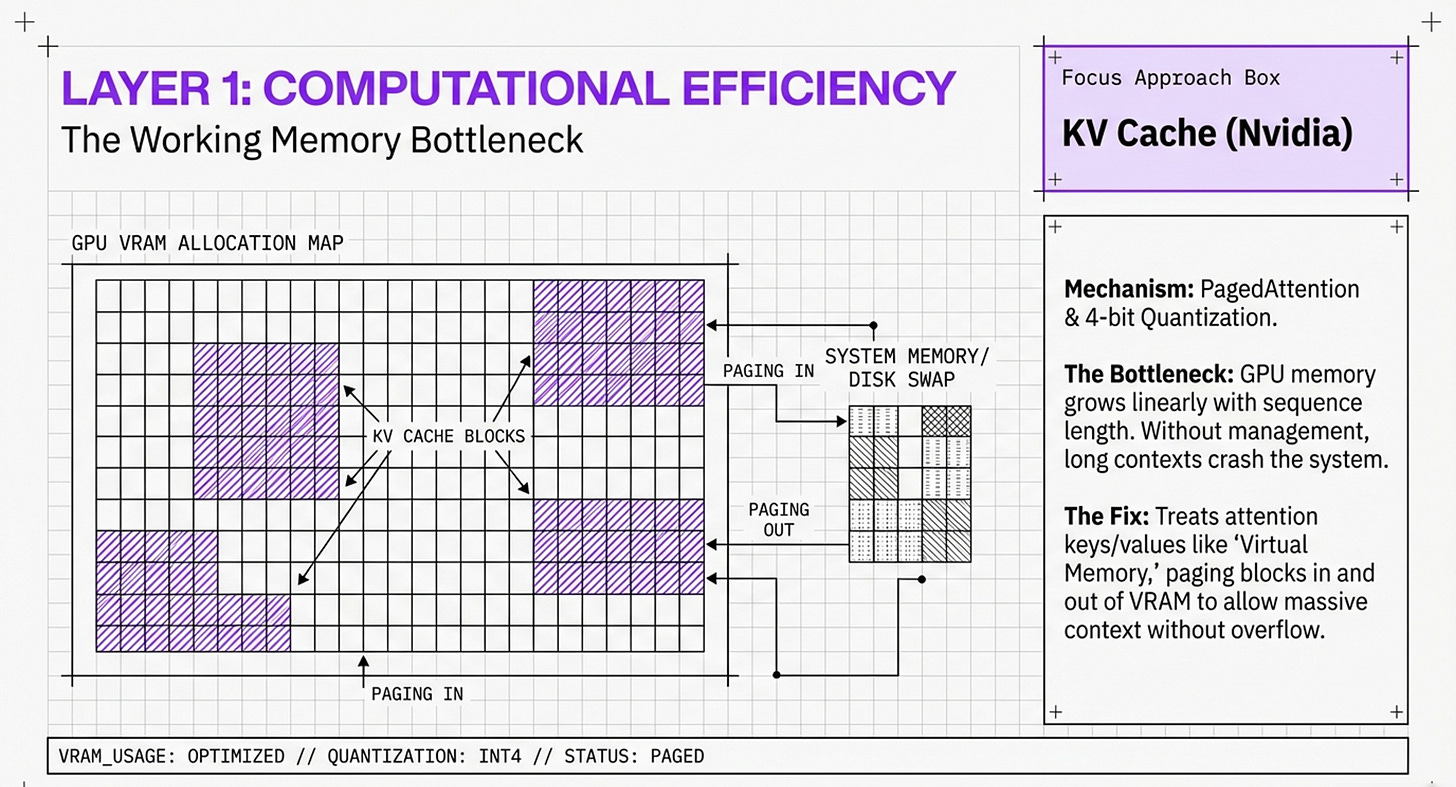

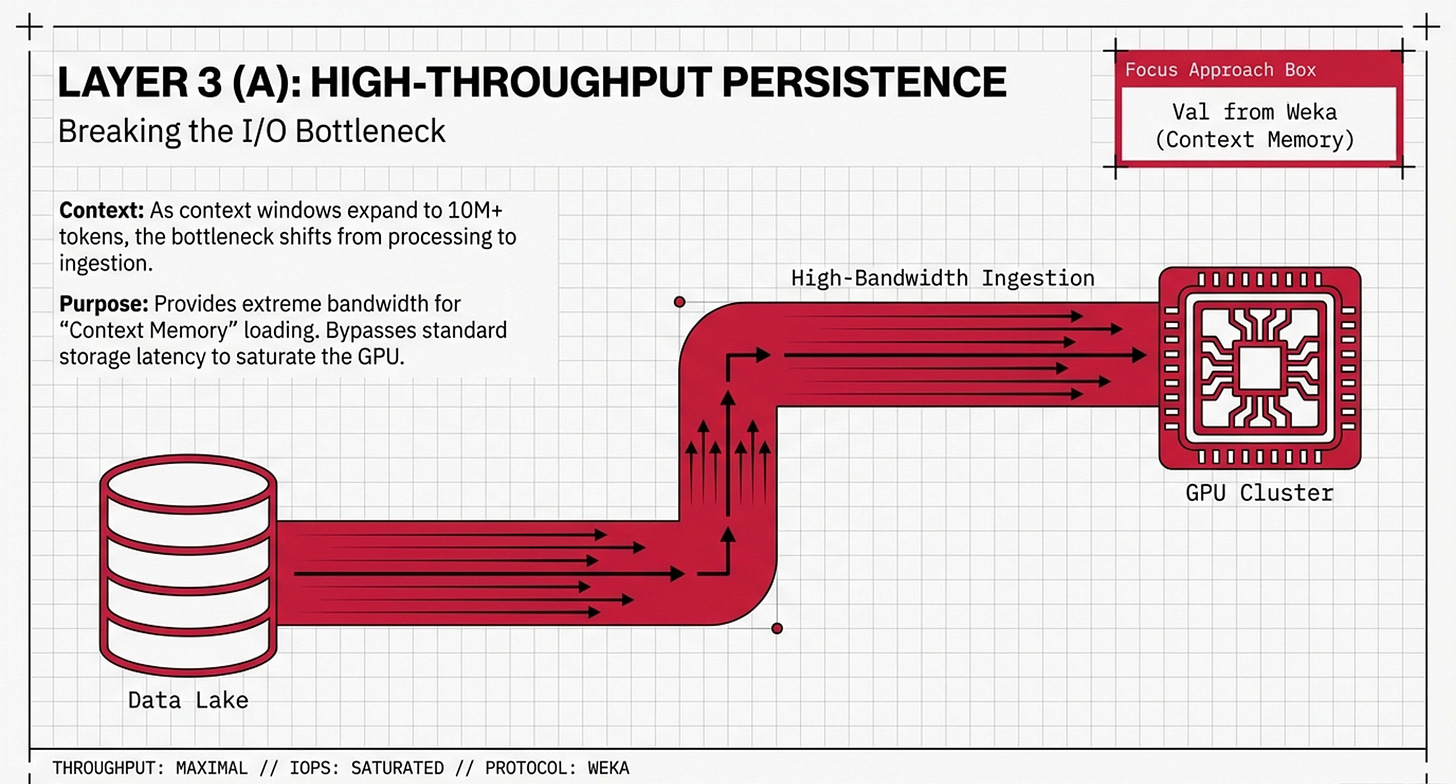

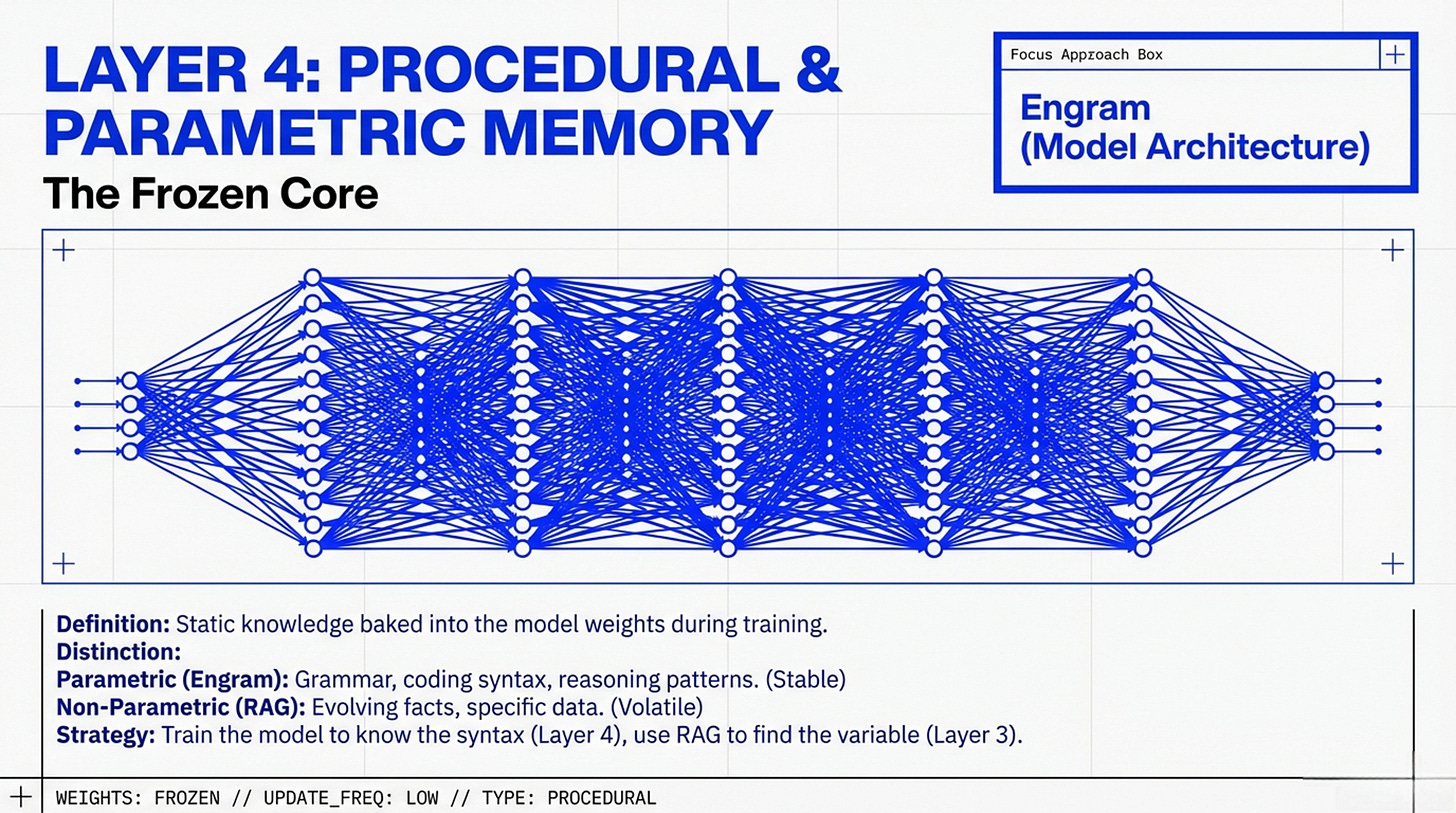

You will see a bottom-up pyramid that anchors AI reasoning directly in physical silicon. This infographic focuses on the “Physical Scaffold” of the memory wall, illustrating how high-level cognitive functions rest on Layer 0 (Hardware Infrastructure) and Layer 1 (Computational Efficiency). What sets this representation apart is its emphasis on capacity budgeting: it maps techniques like Nvidia KV Cache compression and Weka’s tiered storage directly onto VRAM and DRAM limits. It presents a synthesis where “Passive Context” and “Recursive Language Models” (RLMs) are not just software tricks, but strategic allocations of a device’s physical memory budget. This is the view for those who need to understand how to bypass the “PCIe tax” and hardware bottlenecks to make 24/7 agents a physical reality.
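As a rough illustration of capacity budgeting, the sketch below estimates how much memory a transformer's KV cache needs and how many context tokens fit in a given budget. The formula is the standard one (a key and a value tensor per layer, per KV head, per token). The model dimensions are illustrative assumptions, not figures from any vendor, and compression is modeled only as fewer bytes per element, which is a simplification rather than a description of Nvidia's technique.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_elem: int = 2,
                   batch_size: int = 1) -> int:
    """Bytes needed to hold keys and values for seq_len tokens.

    The leading 2 accounts for one key tensor plus one value tensor
    per layer. bytes_per_elem=2 corresponds to fp16/bf16.
    """
    return (2 * n_layers * n_kv_heads * head_dim
            * seq_len * bytes_per_elem * batch_size)


def max_context_tokens(budget_bytes: int, n_layers: int, n_kv_heads: int,
                       head_dim: int, bytes_per_elem: int = 2) -> int:
    """Largest token count whose KV cache fits in budget_bytes."""
    per_token = kv_cache_bytes(n_layers, n_kv_heads, head_dim,
                               seq_len=1, bytes_per_elem=bytes_per_elem)
    return budget_bytes // per_token


GIB = 2 ** 30

# Hypothetical model shape, chosen only to make the arithmetic clean.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

# 8192 tokens at fp16 for this shape
print(kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=8192) / GIB)

# Tokens that fit in a 4 GiB slice of VRAM, at 16-bit vs 8-bit cache entries
print(max_context_tokens(4 * GIB, LAYERS, KV_HEADS, HEAD_DIM, bytes_per_elem=2))
print(max_context_tokens(4 * GIB, LAYERS, KV_HEADS, HEAD_DIM, bytes_per_elem=1))
```

Under these assumptions, halving the bytes per cache element doubles the context that stays resident in the same VRAM budget, which is the sense in which compression “keeps context hot”.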

The Unbundling of Machine Memory (The Architect’s Strategy)

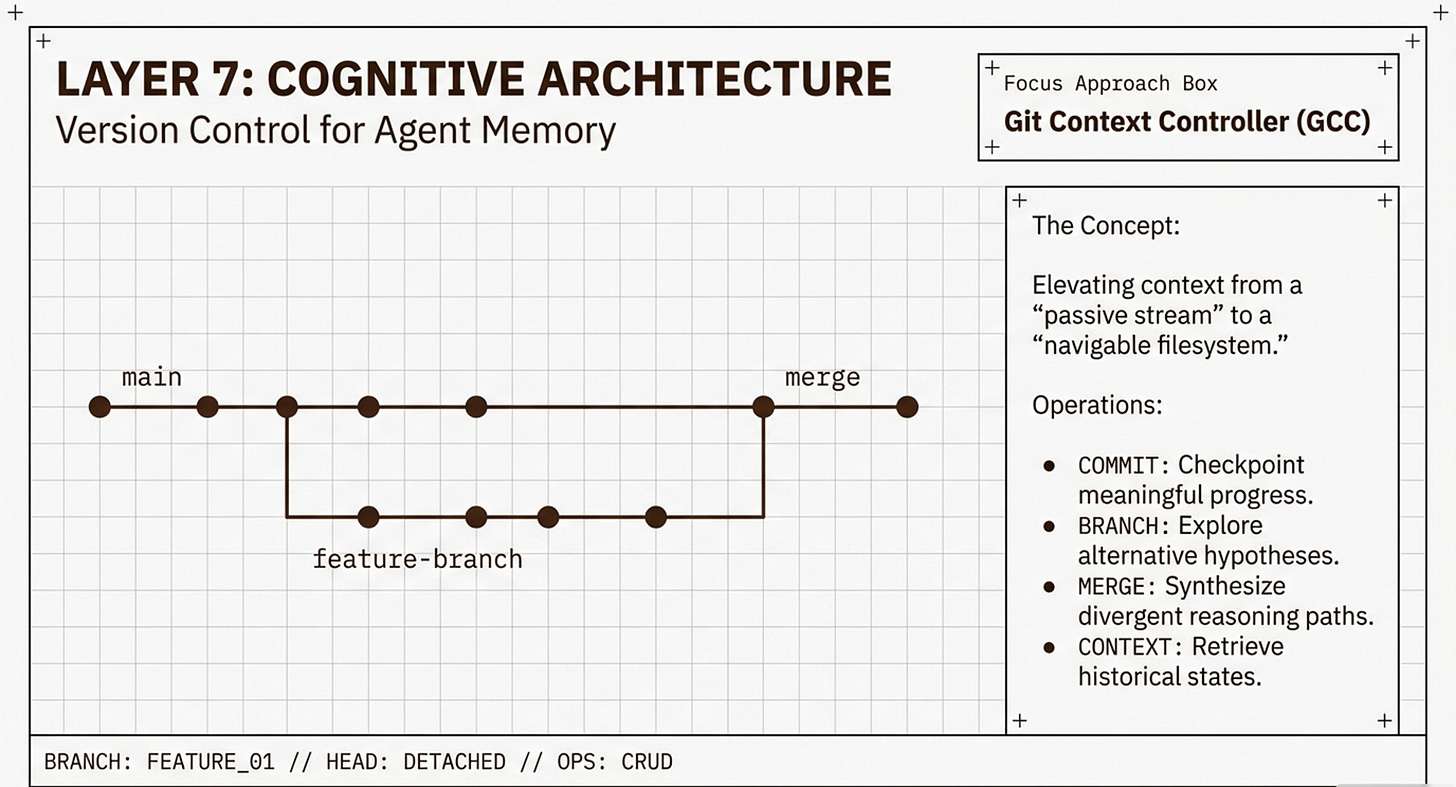

This representation focuses on the functional evolution of agent intelligence and the transition away from monolithic “Vector Search”. You will observe a “broken cube” metaphor, representing the collapse of “Vector Maximalism” under the pressure of two fundamental laws: the Law of Economics (costs) and the Law of Geometry (precision limits/Welch Bound). Unlike the pyramid’s focus on silicon, this view emphasizes the Information Architecture (Layers 2-7), showing how tools like PageIndex and the Git Context Controller (GCC) “unbundle” memory into structured, navigable, and versioned artifacts. This is the strategic view for those looking to move AI from “probabilistic guessing” to “deterministic engineering,” ensuring that an agent can manage its own memory through CRUD operations (Create, Read, Update, Delete) without suffering from “context rot”.
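The combination of CRUD operations and versioning described above can be sketched in a few lines. This is a toy in-memory store, not GCC's actual design: the `VersionedMemory` class and its method names are hypothetical, and full snapshots per commit are used purely for simplicity.

```python
from typing import Dict, List, Optional


class VersionedMemory:
    """Toy agent memory with CRUD plus commit/checkout (hypothetical API)."""

    def __init__(self) -> None:
        self._working: Dict[str, str] = {}
        self._commits: List[dict] = []

    # --- CRUD on the working state ---
    def create(self, key: str, value: str) -> None:
        if key in self._working:
            raise KeyError(f"{key!r} already exists")
        self._working[key] = value

    def read(self, key: str) -> Optional[str]:
        return self._working.get(key)

    def update(self, key: str, value: str) -> None:
        if key not in self._working:
            raise KeyError(f"{key!r} does not exist")
        self._working[key] = value

    def delete(self, key: str) -> None:
        del self._working[key]

    # --- Versioning ---
    def commit(self, message: str) -> int:
        """Snapshot the working state and return the commit id."""
        self._commits.append(
            {"message": message, "snapshot": dict(self._working)}
        )
        return len(self._commits) - 1

    def checkout(self, commit_id: int) -> None:
        """Restore the working state from an earlier snapshot."""
        self._working = dict(self._commits[commit_id]["snapshot"])

    def log(self) -> List[str]:
        return [c["message"] for c in self._commits]


memory = VersionedMemory()
memory.create("goal", "ship v1")
first = memory.commit("record initial goal")

memory.update("goal", "ship v2")
memory.create("note", "v1 was cancelled")
memory.commit("revise goal")

memory.checkout(first)       # roll back to the earlier, known-good state
print(memory.read("goal"))   # the value recorded in the first commit
print(memory.log())
```

The point of the design is that stale or wrong entries are removed or rolled back explicitly, rather than left to accumulate in an ever-growing context.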

Summary of the Difference

• View 1 (the Engineer’s Blueprint) explains the foundation: It shows how hardware enables the software (e.g., how Nvidia compression “keeps context hot” in VRAM).

• View 2 (the Architect’s Strategy) explains the orchestration: It shows how the software manages the information (e.g., how GCC elevates context into a “navigable filesystem” at the top of the stack).
